The Implementing Analytics Solutions Using Microsoft Fabric (DP-600)

Passing the Microsoft Certified: Fabric Analytics Engineer Associate exam brings the successful candidate a powerful array of professional and personal benefits. First and foremost is global recognition that validates your knowledge and skills and strengthens your candidacy with the organizations you want to join.

Why CertAchieve is Better than Standard DP-600 Dumps

In 2026, Microsoft varies the scenarios behind its questions. Basic dumps will fail you.

| Quality Standard | Generic Dump Sites | CertAchieve Premium Prep |
|---|---|---|
| Technical Explanation | None (Answer Key Only) | Step-by-Step Expert Rationales |
| Syllabus Coverage | Often Outdated (v1.0) | 2026 Updated (Latest Syllabus) |
| Scenario Mastery | Blind Memorization | Conceptual Logic & Troubleshooting |
| Instructor Access | No Post-Sale Support | 24/7 Professional Help |

Success backed by proven exam prep tools

Real exam match rate reported by verified users

Consistently high performance across certifications

Efficient prep that reduces study hours significantly

Coverage of Official Microsoft DP-600 Exam Domains

Our curriculum is meticulously mapped to the Microsoft official blueprint.

Plan, Implement, and Manage a Solution for Data Analytics (15%)

The "Governance" layer. Master the administration of the Fabric tenant and capacity. Focus on workspace management, implementing security (Row-level and Object-level), and the Lifecycle Management of analytics assets using Git integration and Deployment Pipelines. Learn to balance performance and cost within the Fabric capacity (F-SKUs).

Prepare and Serve Data (45%)

The "Engineering" core. This is the highest-weighted domain. Master the ingestion and transformation of data using Data Factory (Dataflows Gen2 and Pipelines) and Synapse Data Engineering (Spark/Notebooks). Focus on the Medallion Architecture (Bronze, Silver, Gold), loading data into a Lakehouse or Warehouse, and utilizing T-SQL or PySpark for complex transformations within OneLake.

Implement and Manage Semantic Models (25%)

The "Intelligence" engine. Master the creation of sophisticated semantic models. Focus heavily on Direct Lake mode—the revolutionary Fabric feature that bypasses the need for data imports or DirectQuery. Learn to optimize model performance, write complex DAX expressions, and manage large-scale models using the XMLA endpoint and Tabular Editor.

Explore and Analyze Data (25%)

The "Insights" layer. Master data exploration using SQL analytics endpoints and KQL databases. Focus on creating impactful visualizations in Power BI, utilizing Copilot for Fabric to automate report creation, and identifying trends through exploratory data analysis (EDA). Understand how to leverage Fabric's "built-in" AI capabilities to deliver predictive value to stakeholders.

Microsoft DP-600 Exam Domains Q&A

Certified instructors verify every question for 100% accuracy, providing detailed, step-by-step explanations for each.

QUESTION DESCRIPTION:

You have a Fabric workspace named Workspace1.

You need to create a semantic model named Model1 and publish Model1 to Workspace1. The solution must meet the following requirements:

Can revert to previous versions of Model1 as required.

Identifies differences between saved versions of Model1.

Uses Microsoft Power BI Desktop to publish to Workspace1.

Can edit item definition files by using Microsoft Visual Studio Code.

Which two actions should you perform? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

Correct Answer & Rationale:

Answer: A, C

Explanation:

Requirements:

Revert to previous versions of Model1.

Identify differences between saved versions.

Publish from Power BI Desktop.

Edit item definition files with Visual Studio Code.

Analysis:

To meet version control and difference tracking requirements, you must use Git integration in the Fabric workspace.

To enable editing with VS Code, you must use PBIP format (Power BI Project), which saves report/model as a folder with definition JSON files (not PBIX).

PBIX is a single binary file, not suitable for Git versioning or editing definition files.

"Enable users to edit in service" is irrelevant.

Correct Answers:

A. Enable Git integration for Workspace1.

C. Save Model1 in Power BI Desktop as a PBIP file.

QUESTION DESCRIPTION:

You have a Microsoft Power BI semantic model that contains measures. The measures use multiple CALCULATE functions and a FILTER function.

You are evaluating the performance of the measures.

In which use case will replacing the FILTER function with the KEEPFILTERS function reduce execution time?

Correct Answer & Rationale:

Answer: B

Explanation:

The KEEPFILTERS function modifies the way filters are applied in calculations done through the CALCULATE function. It can be particularly beneficial to replace the FILTER function with KEEPFILTERS when the filter context is being overridden by nested CALCULATE functions, which may remove filters that are being applied on a column. This can potentially reduce execution time because KEEPFILTERS maintains the existing filter context and allows the nested CALCULATE functions to be evaluated more efficiently.
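
The difference between overriding a filter and keeping it can be imitated outside DAX. The Python sketch below is only an analogy built on sets, not a description of how the engine evaluates a measure; the function names and the sample color values are hypothetical.

```python
def calculate_override(existing_filter, new_filter):
    """Imitate a plain column filter in CALCULATE: the new filter
    replaces whatever filter was already on that column."""
    return set(new_filter)


def calculate_keepfilters(existing_filter, new_filter):
    """Imitate KEEPFILTERS: the new filter is intersected with the
    filter that was already on that column."""
    return set(existing_filter) & set(new_filter)


# Hypothetical filter context: a slicer selects two colors,
# and the measure asks for two colors of its own.
slicer_selection = {"Red", "Blue"}
measure_filter = {"Red", "Green"}

overridden = calculate_override(slicer_selection, measure_filter)
kept = calculate_keepfilters(slicer_selection, measure_filter)

print(sorted(overridden))  # values the measure sees without KEEPFILTERS
print(sorted(kept))        # values the measure sees with KEEPFILTERS
```

In this analogy the intersected set is never larger than the overriding one, which is the intuition behind KEEPFILTERS preserving the existing filter context.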

QUESTION DESCRIPTION:

You have a Fabric tenant that contains a warehouse.

A user discovers that a report that usually takes two minutes to render has been running for 45 minutes and has still not rendered.

You need to identify what is preventing the report query from completing.

Which dynamic management view (DMV) should you use?

Correct Answer & Rationale:

Answer: C

Explanation:

In a Fabric warehouse, the dynamic management views available for live monitoring are sys.dm_exec_connections, sys.dm_exec_sessions, and sys.dm_exec_requests. Of these, sys.dm_exec_requests lists every active request in each session together with its status and elapsed time, so it is the view that shows what the long-running report query is doing. The similarly named sys.dm_pdw_exec_requests belongs to Azure Synapse dedicated SQL pools and Analytics Platform System (formerly Parallel Data Warehouse), not to Fabric. Reference: the Microsoft Fabric documentation on monitoring connections, sessions, and requests by using DMVs.

QUESTION DESCRIPTION:

You have a Microsoft Power BI report named Report1 that uses a Fabric semantic model.

Users discover that Report1 renders slowly.

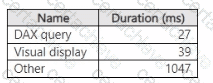

You open Performance analyzer and identify that a visual named Orders By Date is the slowest to render. The duration breakdown for Orders By Date is shown in the following table.

What will provide the greatest reduction in the rendering duration of Report1?

Correct Answer & Rationale:

Answer: D

Explanation:

Based on the duration breakdown provided, the major contributor to the rendering duration is categorized as "Other," which is significantly higher than the DAX Query and Visual display times. This suggests that the issue is less likely with the DAX calculation or visual rendering times and more likely related to model performance or the complexity of the visual. However, of the options provided, optimizing the DAX query can be a crucial step, even if "Other" factors are dominant. Using DAX Studio, you can analyze and optimize the DAX queries that power your visuals for performance improvements. Here’s how you might proceed:

Open DAX Studio and connect it to your Power BI report.

Capture the DAX query generated by the Orders By Date visual.

Use the Server Timings and Query Plan features in DAX Studio to analyze the query.

Look for inefficiencies or long-running operations.

Optimize the DAX query by simplifying measures, removing unnecessary calculations, or improving iterator functions.

Test the optimized query to ensure it reduces the overall duration.

QUESTION DESCRIPTION:

Note: This section contains one or more sets of questions with the same scenario and problem. Each question presents a unique solution to the problem. You must determine whether the solution meets the stated goals. More than one solution in the set might solve the problem. It is also possible that none of the solutions in the set solve the problem.

After you answer a question in this section, you will NOT be able to return. As a result, these questions do not appear on the Review Screen.

Your network contains an on-premises Active Directory Domain Services (AD DS) domain named contoso.com that syncs with a Microsoft Entra tenant by using Microsoft Entra Connect.

You have a Fabric tenant that contains a semantic model.

You enable dynamic row-level security (RLS) for the model and deploy the model to the Fabric service.

You query a measure that includes the USERNAME() function, and the query returns a blank result.

You need to ensure that the measure returns the user principal name (UPN) of a user.

Solution: You update the measure to use the USEROBJECT() function.

Does this meet the goal?

Correct Answer & Rationale:

Answer: B

Explanation:

There is no USEROBJECT() function in DAX. The correct functions available are USERNAME() and USERPRINCIPALNAME().

USERPRINCIPALNAME() is the one that returns the UPN directly.

Since the solution refers to a non-existent function (USEROBJECT()), it cannot solve the problem.

Correct approach: Update the measure to use USERPRINCIPALNAME(), not USEROBJECT().
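
Dynamic RLS works by comparing a column in the model with the identity of the signed-in user. The Python sketch below only imitates that comparison to show why the UPN is the value the filter needs; the table, the `SalesRepUPN` column, the addresses, and the `visible_rows` helper are all hypothetical.

```python
def visible_rows(rows, user_principal_name):
    """Return only the rows whose owner column matches the user's UPN.

    The comparison is case-insensitive here because DAX text comparison
    ignores case by default.
    """
    upn = user_principal_name.lower()
    return [row for row in rows if row["SalesRepUPN"].lower() == upn]


# Hypothetical sales table secured by a UPN column.
sales = [
    {"OrderID": 1, "SalesRepUPN": "ana@contoso.com"},
    {"OrderID": 2, "SalesRepUPN": "ben@contoso.com"},
    {"OrderID": 3, "SalesRepUPN": "Ana@contoso.com"},
]

ana_rows = visible_rows(sales, "ana@contoso.com")
print([row["OrderID"] for row in ana_rows])
```

If the identity function returned a blank or a value in another format, the comparison would match nothing and the user would see no rows.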

QUESTION DESCRIPTION:

You have a Fabric tenant that contains a lakehouse.

You plan to query sales data files by using the SQL endpoint. The files will be in an Amazon Simple Storage Service (Amazon S3) storage bucket.

You need to recommend which file format to use and where to create a shortcut.

Which two actions should you include in the recommendation? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.

Correct Answer & Rationale:

Answer: C, D

Explanation:

The SQL analytics endpoint of a lakehouse exposes only Delta tables that appear in the Tables section. Store the sales data in Delta format (Parquet data files plus a transaction log) and create the shortcut in the Tables section, so that the lakehouse recognizes the shortcut target as a table that the SQL endpoint can query. A shortcut in the Files section, or plain Parquet or CSV files without a Delta log, would not surface as a queryable table. Reference: the Microsoft Fabric documentation on OneLake shortcuts and the lakehouse SQL analytics endpoint.

QUESTION DESCRIPTION:

You have a Microsoft Power BI project that contains a semantic model. You plan to use Azure DevOps for version control.

You need to modify the .gitignore file to prevent the data values from the data sources from being pushed to the repository. Which file should you reference?

Correct Answer & Rationale:

Answer: D

Explanation:

When using a Power BI Project (.pbip) with Git:

The project separates model metadata (schema, measures, relationships, etc.) from the actual data values.

The cache.abf file in the .pbi folder is an Analysis Services backup file that holds a local cached copy of the model's data. It is the file that carries data values from the data sources, so it must be listed in .gitignore.

Other options:

localSettings.json → stores settings that apply only to the current user and local computer. It is normally ignored as well, but it does not contain data values.

unappliedChanges.json → holds Power Query changes that have not been applied yet, not data values.

model.bim → defines the semantic model schema (measures, relationships, metadata) and must be included in version control.

Therefore, the file to reference in .gitignore is cache.abf.
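
One way to sanity-check ignore rules before committing is to test project paths against them. The sketch below is a minimal Python illustration and not Git's own matching logic: the two suffixes stand in for the `**/.pbi/localSettings.json` and `**/.pbi/cache.abf` rules that a PBIP project's .gitignore typically carries (treat that as an assumption), and the folder layout and the `is_ignored` helper are hypothetical.

```python
from pathlib import PurePosixPath

# Assumed ignore rules, written as right-anchored suffixes.
IGNORED_SUFFIXES = (".pbi/localSettings.json", ".pbi/cache.abf")


def is_ignored(path):
    """Return True if the path ends with one of the ignored suffixes."""
    candidate = PurePosixPath(path)
    return any(candidate.match(suffix) for suffix in IGNORED_SUFFIXES)


# Hypothetical layout for a project whose model is named Model1.
project_files = [
    "Model1.SemanticModel/model.bim",
    "Model1.SemanticModel/.pbi/cache.abf",
    "Model1.SemanticModel/.pbi/localSettings.json",
    "Model1.SemanticModel/.pbi/unappliedChanges.json",
]

tracked = [path for path in project_files if not is_ignored(path)]
for path in tracked:
    print(path)  # files that would still reach the repository
```

The model definition stays tracked while the local cache and the per-user settings are filtered out.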

QUESTION DESCRIPTION:

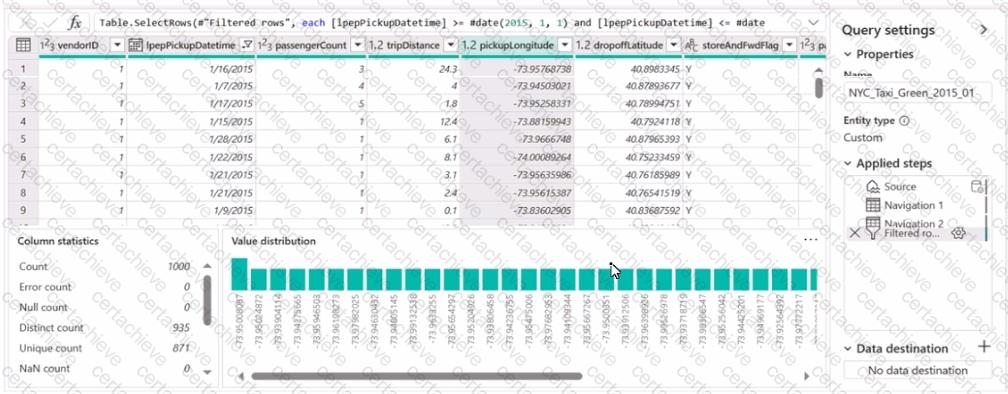

You have a Fabric workspace named Workspace1 that contains a dataflow named Dataflow1. Dataflow1 has a query that returns 2,000 rows. You view the query in Power Query as shown in the following exhibit.

What can you identify about the pickupLongitude column?

Correct Answer & Rationale:

Answer: A

Explanation:

The pickupLongitude column has duplicate values. This can be inferred because the 'Distinct count' is 935 while the 'Count' is 1000, indicating that there are repeated values within the column. The count is 1000 rather than the full 2,000 rows because column profiling in Power Query is based on the top 1,000 rows by default. Reference: the Microsoft Power BI documentation on data profiling explains how to interpret column statistics like these.
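
The reasoning from count and distinct count to duplicates can be reproduced on any list of values. The following Python sketch is a simplified stand-in for the column statistics pane, not Power Query itself; the `column_statistics` helper and the sample longitudes are hypothetical.

```python
from collections import Counter


def column_statistics(values):
    """Compute a simplified version of the profiling statistics."""
    occurrences = Counter(values)
    return {
        "count": len(values),                 # rows profiled
        "distinct": len(occurrences),         # different values seen
        "unique": sum(1 for n in occurrences.values() if n == 1),  # values seen once
    }


# Hypothetical sample of longitude values.
sample = [-73.98, -73.97, -73.98, -74.01, -73.97, -73.95]

stats = column_statistics(sample)
has_duplicates = stats["distinct"] < stats["count"]
print(stats, has_duplicates)
```

Whenever the distinct count is lower than the count, at least one value occurs more than once, which is the same inference drawn from 935 versus 1000.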

QUESTION DESCRIPTION:

You have a Fabric tenant that uses a Microsoft Power BI Premium capacity. You need to enable scale-out for a semantic model. What should you do first?

Correct Answer & Rationale:

Answer: C

Explanation:

To enable scale-out for a semantic model, you should first set Large dataset storage format to On (C) at the semantic model level. This configuration is necessary to handle larger datasets effectively in a scaled-out environment. Reference: guidance on configuring the large dataset storage format for scale-out is available in the Power BI documentation.

QUESTION DESCRIPTION:

You need to ensure the data loading activities in the AnalyticsPOC workspace are executed in the appropriate sequence. The solution must meet the technical requirements.

What should you do?

Correct Answer & Rationale:

Answer: A

Explanation:

To meet the technical requirement that data loading activities must ensure the raw and cleansed data is updated completely before populating the dimensional model, you would need a mechanism that allows for ordered execution. A pipeline in Microsoft Fabric with dependencies set between activities can ensure that activities are executed in a specific sequence. Once set up, the pipeline can be scheduled to run at the required intervals (hourly or daily depending on the data source).
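
Ordering activities by their dependencies is a topological sort. The Python sketch below illustrates that idea with the standard library and is not the Fabric pipeline engine; the three activity names are hypothetical stand-ins for the raw, cleansed, and dimensional loading steps.

```python
from graphlib import TopologicalSorter

# Each key lists the activities that must succeed before it may start.
# The activity names are hypothetical.
dependencies = {
    "load_raw": set(),
    "load_cleansed": {"load_raw"},
    "populate_dimensional_model": {"load_cleansed"},
}

# static_order() yields every activity after all of its prerequisites.
execution_order = list(TopologicalSorter(dependencies).static_order())
print(execution_order)
```

A dependency that points back to an earlier step would create a cycle, and `TopologicalSorter` raises `CycleError` in that case, which mirrors why activity dependencies must form a one-way chain.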

A Stepping Stone for Enhanced Career Opportunities

Holding the Microsoft Certified: Fabric Analytics Engineer Associate certification significantly enhances your credibility and marketability around the world. Best of all, this formal recognition pays off in tangible career advancement. It helps you move into the job roles you want, often with a substantial increase in income. Beyond the resume, your expertise gives you the confidence to act as a dependable professional who solves real-world business challenges.

Success in the Microsoft DP-600 certification exam keeps you visible and relevant in the fast-evolving tech landscape. It is a lifelong investment in your career that gives you a competitive advantage over non-certified peers and prepares you for further related exams in your domain.

What You Need to Ace Microsoft Exam DP-600

Achieving success in the Microsoft DP-600 exam requires a blend of clear understanding of all the exam topics, practical skills, and practice with the actual format. There is no room for cramming, rote memorization, or reliance on a handful of prominent topics. Being ready for the exam means developing a comprehensive grasp of the syllabus, both theoretical and practical.

Here is a comprehensive strategy for peak performance in the DP-600 certification exam:

- Develop rock-solid theoretical clarity on the exam topics

- Begin with the easier and more familiar topics of the exam syllabus

- Make sure you have a command of the fundamental concepts

- Focus on understanding why each concept matters

- Get hands-on practice, as the exam tests your ability to apply knowledge

- Develop a study routine that manages your time, because slow progress can become a major time sink

- Find a comprehensive, streamlined study resource to support you

Ensuring Outstanding Results in Exam DP-600!

Given the prep strategy above, your primary need for the Microsoft DP-600 exam is a comprehensive study resource. Without one, exam success can be a daunting task. The most important point to keep in mind is to rely on one resource rather than depend on multiple sources. It should be an all-inclusive resource that provides conceptual explanations, hands-on practical exercises, and realistic assessment tools.

CertAchieve: A Reliable All-inclusive Study Resource

CertAchieve offers multiple study tools for thorough and rewarding DP-600 exam prep. Here's an overview of CertAchieve's toolkit:

Microsoft DP-600 PDF Study Guide

This premium guide contains Microsoft DP-600 exam questions and answers that cover the exam syllabus in plain language. The material directs the candidate's focus to the most critical topics. The supporting explanations and examples build both the knowledge and the practical confidence that candidates need to pass the exam. A free demo download of the Microsoft DP-600 study guide PDF is also available so that you can examine the contents and quality of the study material.

Microsoft DP-600 Practice Exams

Practicing DP-600 exam questions is an essential part of your exam preparation. To help with this task, CertAchieve offers the Microsoft DP-600 Testing Engine, which simulates multiple tests that resemble the real exam. These tests are of enormous value for developing your grasp of the material, identifying your strengths and weaknesses, and making up deficiencies in time.

These comprehensive materials are engineered to streamline your preparation process, providing a direct and efficient path to mastering the exam's requirements.

Microsoft DP-600 exam dumps

These realistic dumps include the most significant questions that may appear in your upcoming exam. Studying the DP-600 exam dumps can increase your chances of success and help you earn an outstanding score.

Microsoft DP-600 Microsoft Certified: Fabric Analytics Engineer Associate FAQ

There is no rigid set of formal prerequisites for the Microsoft DP-600 exam, and Microsoft may change the basic eligibility criteria. Generally, thorough theoretical knowledge and hands-on practice of the syllabus topics make you ready to sit the exam.

It requires a comprehensive study plan that includes exam preparation from an authentic, reliable, and exam-oriented study resource. It should provide you with Microsoft DP-600 exam questions that focus on mastering core topics. This resource should also offer extensive hands-on practice using the Microsoft DP-600 Testing Engine.

Finally, it should also introduce you to the expected questions with the help of Microsoft DP-600 exam dumps to enhance your readiness for the exam.

Like any other Microsoft certification exam, the Microsoft Certified: Fabric Analytics Engineer Associate exam is tough and challenging. In particular, its extensive syllabus makes DP-600 exam prep hard. The actual exam requires candidates to develop in-depth knowledge of all syllabus content along with practical skills. The only way to pass on the first try is diligent study and lab practice before taking the exam.

The DP-600 Microsoft exam usually comprises 100 to 120 questions. However, the number of questions may vary. The reason is the format of the exam that may include unscored and experimental questions sometimes. Mostly, the actual exam consists of various question formats, including multiple-choice, simulations, and drag-and-drop.

It depends on your own aptitude and pace. Most people, however, take three to six weeks to complete their Microsoft DP-600 exam prep thoroughly, depending on their prior experience and how engaged they are with their studies. Consistency is the key factor, and studying consistently can shorten the total time.

Yes. Microsoft has transitioned to v1.1, which places more weight on Network Automation, Security Fundamentals, and AI integration. Our 2026 bank reflects these specific updates.

Standard dumps rely on pattern recognition. If Microsoft changes a single detail in a scenario, memorized answers fail. Our rationales teach you the logic so you can solve the problem regardless of the phrasing.
