The Qlik Sense Data Architect Certification Exam – 2024 (QSDA2024)

Passing the Qlik Sense Data Architect exam brings the successful candidate a range of professional and personal benefits. First and foremost is global recognition that validates your knowledge and skills and strengthens your candidacy with the organization of your choice.

Why CertAchieve is Better than Standard QSDA2024 Dumps

In 2026, Qlik uses variable topologies. Basic dumps will fail you.

| Quality Standard | Generic Dump Sites | CertAchieve Premium Prep |
|---|---|---|
| Technical Explanation | None (Answer Key Only) | Step-by-Step Expert Rationales |
| Syllabus Coverage | Often Outdated (v1.0) | 2026 Updated (Latest Syllabus) |
| Scenario Mastery | Blind Memorization | Conceptual Logic & Troubleshooting |
| Instructor Access | No Post-Sale Support | 24/7 Professional Help |

Success backed by proven exam prep tools

Real exam match rate reported by verified users

Consistently high performance across certifications

Efficient prep that reduces study hours significantly

Qlik QSDA2024 Exam Domains Q&A

Certified instructors verify every question for 100% accuracy, providing detailed, step-by-step explanations for each.

QUESTION DESCRIPTION:

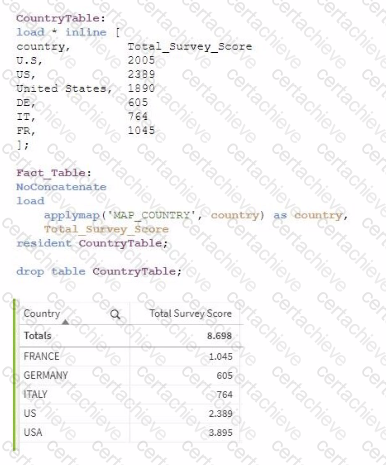

Refer to the exhibits.

On executing a load script of an app, the country field needs to be normalized. The developer uses a mapping table to address the issue. The script runs successfully but the resulting table is not correct.

What should the data architect do?

Correct Answer & Rationale:

Answer: D

Explanation:

In this scenario, the issue arises from using the applymap() function to normalize the country field values, but the result is incorrect. The reason is most likely related to the values in the source mapping table not matching the values in the Fact_Table properly.

The applymap() function in Qlik Sense is designed to map one field to another using a mapping table. If the source values in the mapping table are inconsistent or incorrect, the applymap() will not function as expected, leading to incorrect results.

Steps to resolve:

Review the mapping table (MAP_COUNTRY) : The country field in the CountryTable contains values such as "U.S.", "US", and "United States" for the same country. To correctly normalize the country names, you need to ensure that all variations of a country's name are consistently mapped to a single value (e.g., "USA").

Apply Mapping : Review and clean up the mapping table so that all possible variants of a country are correctly mapped to the desired normalized value.

Key References:

Mapping Tables in Qlik Sense : Mapping tables allow you to substitute field values with mapped values. Any mismatches or variations in source values should be thoroughly reviewed.

Applymap() Function : This function takes a mapping table and applies it to substitute a field value with its mapped equivalent. If the mapped values are not correct or incomplete, the output will not be as expected.
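
The lookup behavior described above can be modelled in a few lines of Python. This is a sketch of the semantics only, not Qlik script: `apply_map` is a hypothetical helper, "U.S.A." is an invented unmapped spelling, and returning the input unchanged when no default is supplied is assumed to mirror how ApplyMap() treats unmatched values.

```python
# Hypothetical model of a mapping table: source spelling -> normalized value.
MAP_COUNTRY = {
    "U.S.": "USA",
    "US": "USA",
    "United States": "USA",
}


def apply_map(mapping, value, default=None):
    """Return the mapped value; fall back to default, else the input itself."""
    if value in mapping:
        return mapping[value]
    return value if default is None else default


# "U.S.A." is deliberately missing from the mapping table (illustrative).
raw = ["US", "U.S.", "United States", "U.S.A."]
normalized = [apply_map(MAP_COUNTRY, v) for v in raw]
print(normalized)
```

Any spelling that is absent from the mapping table passes through unchanged, which is how a script can run successfully and still leave the field only partly normalized.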

QUESTION DESCRIPTION:

Exhibit

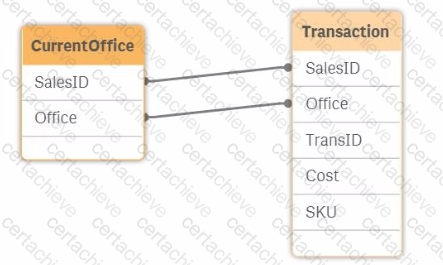

Refer to the exhibit.

The salesperson ID and the office to which the salesperson belongs is stored for each transaction. The data model also contains the current office for the salesperson. The current office of the salesperson and the office the salesperson was in when the transaction occurred must be visible. The current source table view of the model is shown. A data architect must resolve the synthetic key.

How should the data architect proceed?

Correct Answer & Rationale:

Answer: C

Explanation:

In the provided data model, both the CurrentOffice and Transaction tables contain the fields SalesID and Office. This leads to the creation of a synthetic key in Qlik Sense because of the two common fields between the two tables. A synthetic key is created automatically by Qlik Sense when two or more tables have two or more fields in common. While synthetic keys can be useful in some scenarios, they often lead to unwanted and unexpected results, so it’s generally advisable to resolve them.

In this case, the goal is to have both the current office of the salesperson and the office where the transaction occurred visible in the data model. Here’s how each option compares:

Option A: Comment out the Office in the Transaction table: This would remove the Office field from the Transaction table, which would prevent you from seeing which office the salesperson was in when the transaction occurred. This option does not meet the requirement.

Option B: Inner Join the Transaction table to the CurrentOffice table: Performing an inner join would merge the two tables based on the common SalesID and Office fields. However, this might result in a loss of data if there are sales records in the Transaction table that don’t have a corresponding record in the CurrentOffice table or vice versa. This approach might also lead to unexpected results in your analysis.

Option C: Alias Office to CurrentOffice In the CurrentOffice table: By renaming the Office field in the CurrentOffice table to CurrentOffice, you prevent the synthetic key from being created. This allows you to differentiate between the salesperson’s current office and the office where the transaction occurred. This approach maintains the integrity of your data and allows for clear analysis.

Option D: Force concatenation between the tables: Forcing concatenation would combine the rows of both tables into a single table. This would not solve the issue of distinguishing between the current office and the office at the time of the transaction, and it could lead to incorrect data associations.

Given these considerations, the best approach to resolve the synthetic key while keeping both the current office and the office at the time of the transaction visible is to alias Office to CurrentOffice in the CurrentOffice table. This ensures that the data model accurately represents both pieces of information without causing synthetic key issues.
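
The rule that two or more shared field names produce a synthetic key can be checked with a small Python sketch. It is a model, not Qlik script: the helper names are hypothetical, and the TransactionID and Amount fields are invented for illustration; only SalesID and Office come from the question.

```python
from itertools import combinations


def shared_fields(tables):
    """Map each pair of table names to the set of field names they share."""
    return {
        (a, b): set(tables[a]) & set(tables[b])
        for a, b in combinations(tables, 2)
    }


def synthetic_key_pairs(tables):
    """Pairs sharing two or more fields, which is when a synthetic key appears."""
    return [pair for pair, common in shared_fields(tables).items() if len(common) >= 2]


# Model before the fix: both tables carry SalesID and Office.
before = {
    "CurrentOffice": ["SalesID", "Office"],
    "Transaction": ["TransactionID", "SalesID", "Office", "Amount"],
}
# Model after aliasing Office to CurrentOffice in the CurrentOffice table.
after = {
    "CurrentOffice": ["SalesID", "CurrentOffice"],
    "Transaction": ["TransactionID", "SalesID", "Office", "Amount"],
}

print(synthetic_key_pairs(before))
print(synthetic_key_pairs(after))
```

After the alias, SalesID is the only common field, so the tables link on a single key and both office values remain available.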

QUESTION DESCRIPTION:

Sales managers need to see an overview of historical performance and highlight the current year's metrics. The app has the following requirements:

• Display the current year's total sales

• Total sales displayed must respond to the user's selections

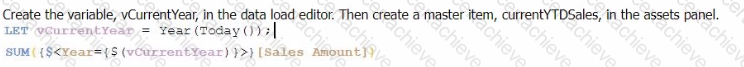

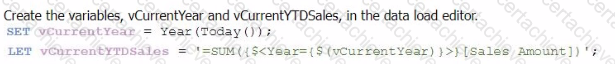

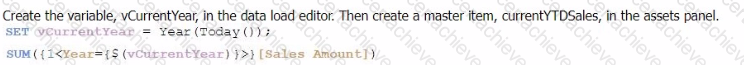

Which variables should a data architect create to meet these requirements?

A)

B)

C)

D)

Correct Answer & Rationale:

Answer: C

Explanation:

To meet the requirements of displaying the current year's total sales in a way that responds to user selections, the correct approach involves using both SET and LET statements to define the necessary variables in the data load editor.

Explanation of Option C:

SET vCurrentYear = Year(Today());

The SET statement assigns the text to the right of the equals sign to vCurrentYear without evaluating it, so the variable holds the text Year(Today()) rather than a number. The year is worked out only when the variable is expanded inside an expression that is then evaluated, which keeps it tied to the current date.

LET vCurrentYTDSales = '=SUM({$<Year={'$(vCurrentYear)'}>} [Sales Amount])';

The LET statement evaluates the expression to the right of the equals sign before assigning the result to vCurrentYTDSales. The stored value begins with an equals sign, so when vCurrentYTDSales is used in a chart or KPI it calculates the Year-to-Date (YTD) sales for the current year by filtering the Year field to match vCurrentYear, while still respecting the user's selections.

Key Points:

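Dynamic Year Calculation : Because SET stores the text Year(Today()) without evaluating it, the current year is calculated whenever the variable is expanded and evaluated rather than being fixed as a number at reload time.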

Responsive to Selections : The set analysis syntax {$ < Year={'$(vCurrentYear)'} > } ensures that the sales totals respond to user selections while still focusing on the current year's data.

Appropriate Use of SET and LET : The combination of SET for storing the year and LET for storing the evaluated sum expression ensures that the variables are used effectively in the application.
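
The difference between SET and LET can be pictured with a toy Python model. This is not Qlik script: `set_var` and `let_var` are hypothetical helpers, and the arithmetic `3 + 4` stands in for any expression.

```python
variables = {}


def set_var(name, text):
    """Model of SET: store the text exactly as written, unevaluated."""
    variables[name] = text


def let_var(name, expression):
    """Model of LET: evaluate the expression, then store the result as text."""
    # eval() is tolerable here only because the input is a hard-coded literal.
    variables[name] = str(eval(expression, {"__builtins__": {}}, {}))


set_var("x", "3 + 4")
let_var("y", "3 + 4")
print(variables)
```

In this model `x` keeps the unevaluated text while `y` keeps the computed result, which is the distinction the explanation relies on.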

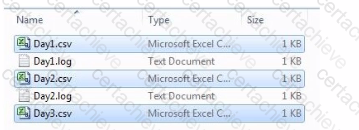

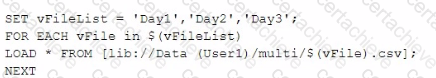

QUESTION DESCRIPTION:

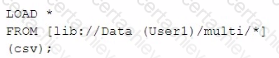

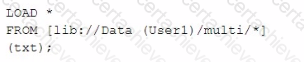

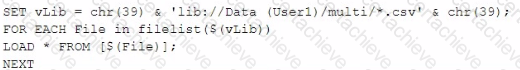

Refer to the exhibit.

A system creates log files and csv files daily and places these files in a folder. The log files are named automatically by the source system and change regularly. All csv files must be loaded into Qlik Sense for analysis.

Which method should be used to meet the requirements?

A)

B)

C)

D)

Correct Answer & Rationale:

Answer: B

Explanation:

In the scenario described, the goal is to load all CSV files from a directory into Qlik Sense, while ignoring the log files that are also present in the same directory. The correct approach should allow for dynamic file loading without needing to manually specify each file name, especially since the log files change regularly.

Here’s why Option B is the correct choice:

Option A: This method involves manually specifying a list of files (Day1, Day2, Day3) and then iterating through them to load each one. While this method would work, it requires knowing the exact file names in advance, which is not practical given that new files are added regularly. Also, it doesn’t handle dynamic file name changes or new files added to the folder automatically.

Option B: This approach uses a wildcard (*) in the file path, which tells Qlik Sense to load all files matching the pattern (in this case, all CSV files in the directory). Since the csv file extension is explicitly specified, only the CSV files will be loaded, and the log files will be ignored. This method is efficient and handles the dynamic nature of the file names without needing manual updates to the script.

Option C: This option is similar to Option B but targets text files (txt) instead of CSV files. Since the requirement is to load CSV files, this option would not meet the needs.

Option D: This option uses a more complex approach with filelist() and a loop, which could work, but it’s more complex than necessary. Option B achieves the same result more simply and directly.

Therefore, Option B is the most efficient and straightforward solution, dynamically loading all CSV files from the specified directory while ignoring the log files, as required.
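
The effect of the wildcard can be sketched in Python. The folder listing is hypothetical (the real file names appear only in the exhibit) and `select_csv` is an illustrative helper, not part of Qlik.

```python
from fnmatch import fnmatchcase


def select_csv(filenames, pattern="*.csv"):
    """Keep only the names matching the wildcard pattern, in sorted order."""
    return sorted(name for name in filenames if fnmatchcase(name.lower(), pattern))


# Hypothetical folder contents: daily csv files mixed with changing log files.
folder = ["sales_day1.csv", "sales_day2.csv", "sys_8841.log", "sys_8842.log", "notes.txt"]
print(select_csv(folder))
```

Because selection depends only on the extension, new csv files are picked up and renamed log files are skipped without any change to the pattern.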

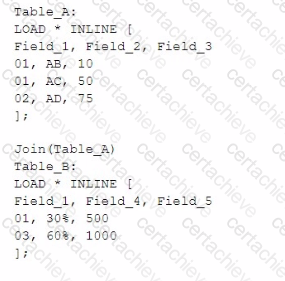

QUESTION DESCRIPTION:

A data architect executes the following script:

What will be the result of Table_A?

A)

B)

C)

D)

Correct Answer & Rationale:

Answer: D

Explanation:

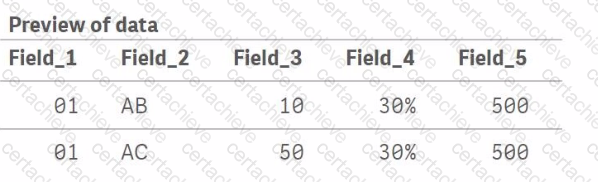

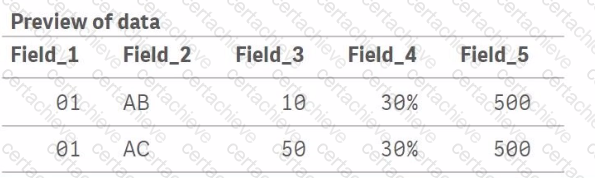

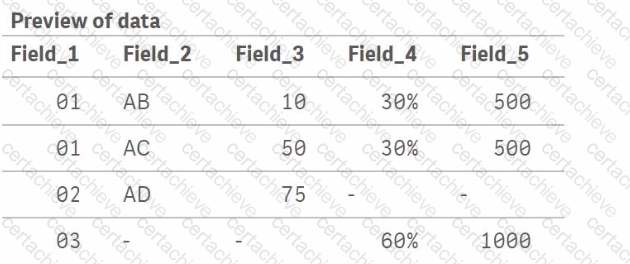

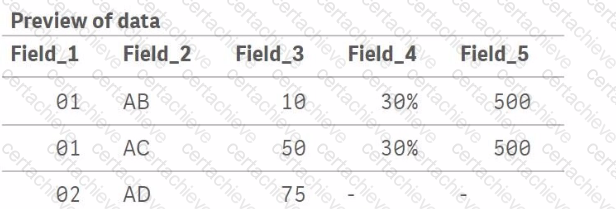

In the script provided, there are two tables being loaded inline: Table_A and Table_B. The script uses the Join function to combine Table_B with Table_A based on the common field Field_1. Here’s how the join operation works:

Table_A initially contains three records with Field_1 values of 01, 01, and 02.

Table_B contains two records with Field_1 values of 01 and 03.

The result described below keeps every row of Table_A and adds the Table_B fields wherever Field_1 matches, which is the behavior of a Left Join (Table_A) prefix. Note that an unqualified Join prefix in Qlik Sense performs a full outer join rather than an inner join: it would also keep the unmatched Table_B row, while an inner join would drop the unmatched Table_A row. With a left join, the result is:

For Field_1 = 01, there are two rows in Table_A and one matching row in Table_B. This results in two records in the joined table, with the Field_4 and Field_5 values from Table_B repeated for each Table_A row.

For Field_1 = 02, there is no matching row in Table_B, so the record is kept and its Field_4 and Field_5 values are null.

For Field_1 = 03, there is no matching row in Table_A, so the record from Table_B is not included in the final joined table.

Thus, the correct output will look like this:

Field_1 = 01, Field_2 = AB, Field_3 = 10, Field_4 = 30%, Field_5 = 500

Field_1 = 01, Field_2 = AC, Field_3 = 50, Field_4 = 30%, Field_5 = 500

Field_1 = 02, Field_2 = AD, Field_3 = 75, Field_4 = null, Field_5 = null
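
The three rows listed above can be reproduced with a small left-join sketch in Python. This is a model, not Qlik script: `left_join` is a hypothetical helper, and the Field_4 and Field_5 values on the 03 row are placeholders because the source does not list them.

```python
def left_join(left, right, key):
    """Keep every left row; add right-hand fields where the key matches."""
    right_fields = {f for row in right for f in row if f != key}
    result = []
    for l_row in left:
        matches = [r for r in right if r[key] == l_row[key]]
        if not matches:
            # No partner: right-hand fields are filled with None (null).
            result.append({**l_row, **{f: None for f in right_fields}})
        for r_row in matches:
            result.append({**l_row, **r_row})
    return result


table_a = [
    {"Field_1": "01", "Field_2": "AB", "Field_3": 10},
    {"Field_1": "01", "Field_2": "AC", "Field_3": 50},
    {"Field_1": "02", "Field_2": "AD", "Field_3": 75},
]
table_b = [
    {"Field_1": "01", "Field_4": "30%", "Field_5": 500},
    # Placeholder values: the source does not show this row's contents.
    {"Field_1": "03", "Field_4": "n/a", "Field_5": 0},
]

joined = left_join(table_a, table_b, "Field_1")
for row in joined:
    print(row)
```

The 03 row never appears because the loop runs over the left table only; a full outer join would add it with null Field_2 and Field_3.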

QUESTION DESCRIPTION:

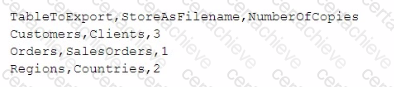

A data architect needs to develop a script to export tables from a model based upon rules from an independent file. The structure of the text file with the export rules is as follows:

These rules govern which table in the model to export, what the target root filename should be, and the number of copies to export.

The TableToExport values are already verified to exist in the model.

In addition, the format will always be QVD, and the copies will be incrementally numbered.

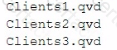

For example, the Customers table would be exported as:

What is the minimum set of scripting strategies the data architect must use?

Correct Answer & Rationale:

Answer: A

Explanation:

In the provided scenario, the goal is to export tables from a Qlik Sense model based on rules specified in an external text file. The structure of the text file indicates which table to export, the filename to use, and how many copies to create.

Given this structure, the data architect needs to:

Loop through each row in the text file to process each table.

Use an IF statement to check whether the specified table exists in the model (though it's mentioned they are verified to exist, this step may involve conditional logic to ensure the rules are correctly followed).

Use another IF statement to handle the creation of multiple copies, ensuring each file is named incrementally (e.g., Clients1.qvd, Clients2.qvd, etc.).

Key Script Strategies:

Loop : A loop is necessary to iterate through each row of the text file to process the tables specified for export.

IF Statements : The first IF statement checks conditions such as whether the table should be exported (based on additional logic if needed). The second IF statement handles the creation of multiple copies by incrementing the filename.

This approach covers all the necessary logic with the minimum set of scripting strategies, ensuring that each table is exported according to the rules defined.
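
The naming rule for the exported copies can be illustrated with a short Python sketch. It models only how rule rows expand into numbered QVD filenames and is not Qlik script. The dictionary keys and the Orders row are hypothetical, since the real file layout is shown only in the exhibit.

```python
def export_targets(rules):
    """Expand each rule into (table, filename) pairs, one per requested copy."""
    targets = []
    for rule in rules:                          # one pass per rule row
        for n in range(1, rule["copies"] + 1):  # one pass per numbered copy
            targets.append((rule["table"], f"{rule['root']}{n}.qvd"))
    return targets


# Hypothetical rule rows: table to export, root filename, number of copies.
rules = [
    {"table": "Customers", "root": "Clients", "copies": 2},
    {"table": "Orders", "root": "Orders", "copies": 1},
]
print(export_targets(rules))
```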

QUESTION DESCRIPTION:

A startup company is about to have its Initial Public Offering (IPO) on the New York Stock Exchange.

This startup company has used Qlik Sense for many years for data-based decision making for Sales and Marketing efforts, as well as for input into Financial Reporting. The startup's Qlik Sense applications use variables that have different values at different points in time.

Due to the increased rigor required in record keeping for public companies, these variables must be clearly recorded in the script reload logs of the Qlik Sense applications. These logs are refreshed daily.

The data architect wants to have the variable names, with their current values, written into the script reload logs. Which script statement should the data architect use?

Correct Answer & Rationale:

Answer: B

Explanation:

In the scenario where the startup company is preparing for an IPO, there is an increased need for meticulous record-keeping, including the recording of variable values used in Qlik Sense applications. The TRACE statement is the most suitable option for logging variable values during script execution.

TRACE : This statement writes custom messages, including variable values, to the script execution log. By using TRACE, you can ensure that every reload log contains the names and current values of all relevant variables, providing the necessary transparency and traceability.

For example, the script could include:

TRACE $(VariableName);

This command will output the variable's value in the script log, ensuring it is recorded for audit purposes.

QUESTION DESCRIPTION:

A Chief Information Officer has hired Qlik to enhance the organization's inventory analytics. In the initial meeting, the client's focus was determined to be forecasting inventory levels.

Which stakeholder should be consulted first when gathering requirements?

Correct Answer & Rationale:

Answer: A

Explanation:

In this scenario, the focus of the project is to enhance inventory analytics, specifically targeting forecasting inventory levels. The primary goal is to understand the factors influencing inventory management and to build a model that helps in predicting future inventory needs.

Option A: Product Buyer is the correct stakeholder to consult first.

Here’s why:

Direct Involvement in Inventory Management :

The Product Buyer is typically responsible for making decisions related to purchasing and maintaining inventory levels. They have a deep understanding of the factors that influence inventory needs, such as lead times, supplier reliability, demand forecasting, and purchasing cycles.

Knowledge of Inventory Requirements :

Since the project’s primary focus is forecasting inventory levels, the Product Buyer will provide crucial insights into the variables that affect inventory and the data needed for accurate forecasting. They can guide what historical data is essential and what external factors might need to be considered in the forecasting model.

Alignment with Business Objectives :

By consulting the Product Buyer, the project can ensure that the inventory forecasting models align with the company’s inventory management objectives, avoiding overstocking or understocking, and thus optimizing costs.

References :

Qlik Project Management Best Practices : In analytics projects, particularly those focused on specific operational areas like inventory management, consulting the stakeholders who are closest to the operational data and decision-making processes ensures that the solution will be relevant and effective.

QUESTION DESCRIPTION:

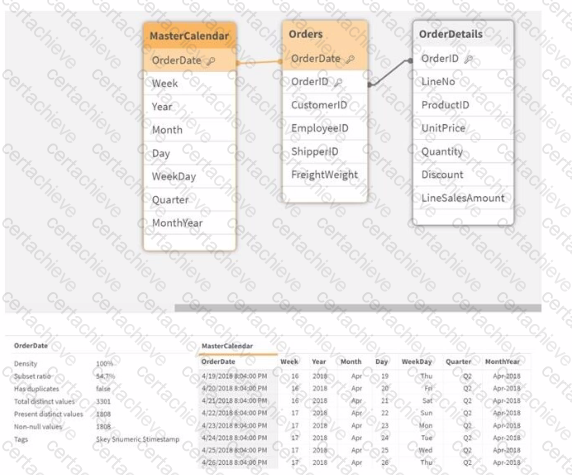

Exhibit.

Refer to the exhibit.

A business analyst informs the data architect that not all analysis types over time show the expected data.

Instead they show very little data, if any.

Which Qlik script function should be used to resolve the issue in the data model?

Correct Answer & Rationale:

Answer: A

Explanation:

In the provided data model, certain types of analysis over time do not show the expected data. This usually happens when the OrderDate field in the Orders table holds timestamps (a date plus a time of day) while the MasterCalendar table holds whole dates, so most OrderDate values have no matching calendar row.

Option A: Date(Floor(OrderDate)) rounds each timestamp down to midnight and formats the result as a date. The underlying numeric value becomes a whole number, so it matches the whole-date values in the MasterCalendar. This resolves the issue.

Option B: TimeStamp#(OrderDate, 'M/D/YYYY hh.mm.ff') interprets text as a timestamp. The time portion is kept, so the values still do not match the calendar dates.

Option C: TimeStamp(OrderDate) formats the value as a timestamp and keeps both date and time, which still causes mismatches if the MasterCalendar deals purely with dates.

Option D: Date(OrderDate) changes only how the value is displayed. The time portion remains in the underlying numeric value, so values that look identical on screen still fail to link to the calendar.

In Qlik Sense, dates and timestamps are stored as dual values (both text and numeric), and a formatting function changes only the text part. Applying Date(Floor(OrderDate)) to OrderDate in the Orders table removes the time portion from the numeric part as well, so the field links to the whole dates in the MasterCalendar and the time-based analyses show the expected data.
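
The numeric side of a date can be pictured with a short Python model. Dates are represented as day serial numbers whose fractional part is the time of day; the specific values are illustrative and not taken from the exhibit.

```python
import math

# Integer part = day, fraction = time of day (0.5 is noon). Illustrative values.
order_timestamps = [45000.25, 45000.75, 45001.5]
calendar_dates = {45000, 45001}

# A value with a time fraction never equals a whole-day calendar value.
unmatched = [t for t in order_timestamps if t not in calendar_dates]

# Rounding down to the day boundary makes every value line up with the calendar.
floored = [math.floor(t) for t in order_timestamps]
matched = [d for d in floored if d in calendar_dates]

print(len(unmatched), len(matched))
```

Changing only the display text of a value leaves these numbers untouched, so the comparison is decided by whether the fractional part has been removed.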

QUESTION DESCRIPTION:

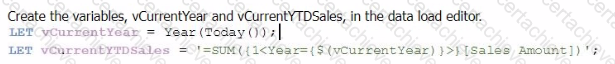

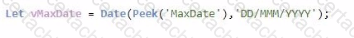

A data architect wants to reflect the value of a variable in the script log for tracking purposes. The variable is defined as:

Which statement should be used to track the variable's value?

A)

B)

C)

D)

Correct Answer & Rationale:

Answer: B

Explanation:

In Qlik Sense, the TRACE statement is used to print custom messages to the script execution log. To output the value of a variable, particularly one that is dynamically assigned, the correct syntax must be used to ensure that the variable's value is evaluated and displayed correctly.

The variable vMaxDate is defined with the LET statement, which means it is evaluated immediately, and its value is stored.

When using the TRACE statement, to output the value of vMaxDate, you need to ensure the variable's value is expanded before being printed. This is done using the $() expansion syntax.

The correct syntax is TRACE #### $(vMaxDate) ####; which evaluates the variable vMaxDate and inserts its value into the log output.

Key Qlik Sense Data Architect References:

Variable Expansion: In Qlik Sense scripting, $(variable_name) is used to expand and insert the value of the variable into expressions or statements. This is crucial when you want to output or use the value stored in a variable.

TRACE Statement: The TRACE command is used to write messages to the script log. It is commonly used for debugging purposes to track the flow of script execution or to verify the values of variables during script execution.
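
Dollar-sign expansion is essentially text substitution that happens before the statement runs. A minimal Python model is sketched below. The `expand` helper and the date value are hypothetical, and turning unknown names into empty text is a choice made for this sketch.

```python
import re


def expand(text, variables):
    """Replace each $(name) with the variable's value; unknown names become empty."""
    return re.sub(r"\$\((\w+)\)", lambda m: str(variables.get(m.group(1), "")), text)


# Placeholder value: the real definition of vMaxDate is shown only in the exhibit.
variables = {"vMaxDate": "2024-12-31"}
print(expand("#### $(vMaxDate) ####", variables))
print(expand("vMaxDate = $(vMaxDate)", variables))
```

The second call shows how literal text before the expansion lets a log line carry the variable's name as well as its value.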

A Stepping Stone for Enhanced Career Opportunities

A Qlik Sense Data Architect certification on your profile significantly enhances your credibility and marketability worldwide. Better still, this formal recognition pays off in tangible career advancement: it helps you secure the job roles you want, along with a substantial increase in income. Beyond the resume, your expertise gives you the confidence to act as a dependable professional who solves real-world business challenges.

Success in the Qlik QSDA2024 certification exam makes you visible and relevant in the fast-evolving tech landscape. It is a lifelong investment in your career that gives you a competitive advantage over non-certified peers and makes you eligible for further relevant exams in your domain.

What You Need to Ace Qlik Exam QSDA2024

Success in the QSDA2024 Qlik exam requires a blend of clear understanding of all the exam topics, practical skills, and practice with the actual format. Cramming, memorizing facts, or relying on a few prominent topics will not be enough. You need a comprehensive grasp of the syllabus that covers both theory and practice.

Here is a comprehensive strategy layout to secure peak performance in QSDA2024 certification exam:

- Build a solid theoretical understanding of the exam topics

- Begin with the easier and more familiar topics of the exam syllabus

- Make sure you have command of the fundamental concepts

- Focus on understanding why each concept matters

- Get hands-on practice, because the exam tests your ability to apply knowledge

- Develop a study routine and manage your time, because slow progress can turn preparation into a major time sink

- Find a comprehensive and streamlined study resource to support you

Ensuring Outstanding Results in Exam QSDA2024!

Given the prep strategy above for the QSDA2024 Qlik exam, your primary need is a comprehensive study resource; without one, exam success can be a daunting task. The most important point to keep in mind is to rely on one resource rather than on multiple sources. It should be an all-inclusive resource that provides conceptual explanations, hands-on practical exercises, and realistic assessment tools.

Certachieve: A Reliable All-inclusive Study Resource

Certachieve offers multiple study tools for thorough and rewarding QSDA2024 exam prep. Here's an overview of Certachieve's toolkit:

Qlik QSDA2024 PDF Study Guide

This premium guide contains Qlik QSDA2024 exam questions and answers that cover the full exam syllabus in plain language. It directs the candidate's focus to the most critical topics. The supporting explanations and examples build both the knowledge and the practical confidence needed to pass the exam. A free demo of the Qlik QSDA2024 study guide PDF is also available for download so you can examine the contents and quality of the study material.

Qlik QSDA2024 Practice Exams

Practicing QSDA2024 exam questions is an essential part of your exam preparation. To help with this, Certachieve offers the Qlik QSDA2024 Testing Engine, which simulates multiple tests that resemble the real exam. These tests help you develop your grasp of the material, understand your strengths and weaknesses, and make up deficiencies in time.

These comprehensive materials are engineered to streamline your preparation process, providing a direct and efficient path to mastering the exam's requirements.

Qlik QSDA2024 exam dumps

These realistic dumps include the most significant questions that may appear in your upcoming exam. Studying the QSDA2024 exam dumps can increase your chances of success and help you achieve an outstanding score.

Qlik QSDA2024 Qlik Sense Data Architect FAQ

There is only a formal set of prerequisites for the QSDA2024 Qlik exam, and Qlik may change the basic eligibility criteria. In general, thorough theoretical knowledge and hands-on practice of the syllabus topics prepare you to sit the exam.

It requires a comprehensive study plan built on an authentic, reliable, and exam-oriented study resource. That resource should provide Qlik QSDA2024 exam questions that focus on mastering core topics, along with extensive hands-on practice using the Qlik QSDA2024 Testing Engine.

Finally, it should also introduce you to the expected questions with the help of Qlik QSDA2024 exam dumps to enhance your readiness for the exam.

Like any other Qlik certification exam, the Qlik Sense Data Architect exam is tough and challenging. In particular, its extensive syllabus makes QSDA2024 exam prep hard. The actual exam requires candidates to develop in-depth knowledge of all syllabus content along with practical skills. The only way to pass on the first try is diligent study and lab practice before taking the exam.

The QSDA2024 Qlik exam usually comprises 100 to 120 questions. However, the number of questions may vary. The reason is the format of the exam that may include unscored and experimental questions sometimes. Mostly, the actual exam consists of various question formats, including multiple-choice, simulations, and drag-and-drop.

It depends on your personal commitment and how quickly you absorb material. Most people take three to six weeks to complete Qlik QSDA2024 exam prep thoroughly, depending on their prior experience and how much they engage with their studies. Consistency is the prime factor, and it can reduce the total time needed.

Yes. Qlik has transitioned to v1.1, which places more weight on Network Automation, Security Fundamentals, and AI integration. Our 2026 bank reflects these specific updates.

Standard dumps rely on pattern recognition. If Qlik changes a single detail in a scenario, memorized answers fail. Our rationales teach you the logic so you can solve the problem regardless of the phrasing.
