The MuleSoft Certified Platform Architect - Level 1 (MCPA-Level-1)

Passing the MuleSoft Certified Platform Architect exam brings the successful candidate a range of professional and personal benefits. The foremost benefit is global recognition that validates your knowledge and skills, which can open doors at the organizations you want to work for.

Why CertAchieve is Better than Standard MCPA-Level-1 Dumps

In 2026, MuleSoft uses variable topologies. Basic dumps will fail you.

| Quality Standard | Generic Dump Sites | CertAchieve Premium Prep |
|---|---|---|
| Technical Explanation | None (Answer Key Only) | Step-by-Step Expert Rationales |
| Syllabus Coverage | Often Outdated (v1.0) | 2026 Updated (Latest Syllabus) |
| Scenario Mastery | Blind Memorization | Conceptual Logic & Troubleshooting |
| Instructor Access | No Post-Sale Support | 24/7 Professional Help |


MuleSoft MCPA-Level-1 Exam Domains Q&A

Certified instructors verify every question for 100% accuracy, providing detailed, step-by-step explanations for each.

QUESTION DESCRIPTION:

An organization wants MuleSoft-hosted runtime plane features (such as HTTP load balancing, zero downtime, and horizontal and vertical scaling) in its Azure environment. What runtime plane minimizes the organization's effort to achieve these features?

Correct Answer & Rationale:

Answer: A

Explanation:

Correct Answer: Anypoint Runtime Fabric

- When a customer already has an Azure environment, a hybrid model with some Mule runtimes hosted on Azure and some hosted by MuleSoft is not a good fit. It adds complexity without adding any benefit.

- CloudHub is a MuleSoft-hosted runtime plane that runs on AWS. It cannot be customized to point at the customer's Azure environment.

- Anypoint Platform for Pivotal Cloud Foundry is specifically for infrastructure provided by Pivotal Cloud Foundry.

- Anypoint Runtime Fabric is the right answer because it is a container service that automates the deployment and orchestration of Mule applications and API gateways. Runtime Fabric runs within customer-managed infrastructure on AWS, Azure, virtual machines (VMs), and bare-metal servers.

Some of the capabilities of Anypoint Runtime Fabric include:

- Isolation between applications by running a separate Mule runtime per application.

- Ability to run multiple versions of Mule runtime on the same set of resources.

- Scaling applications across multiple replicas.

- Automated application fail-over.

- Application management with Anypoint Runtime Manager.

QUESTION DESCRIPTION:

A Platinum customer uses the U.S. control plane and deploys applications to CloudHub in Singapore with a default log configuration.

The compliance officer asks where the logs and monitoring data reside?

Correct Answer & Rationale:

Answer: B

Explanation:

For applications deployed on CloudHub in a foreign region (e.g., Singapore), MuleSoft handles log and monitoring data in the region where the control plane resides. This data storage policy is standard for CloudHub deployments to maintain centralized log and monitoring data.

Data Location:

For a U.S.-based control plane, all logs and monitoring data are stored in the United States, regardless of the deployment region.

Although the application itself runs in Singapore, data related to application performance and logs is not localized to the deployment region.

Explanation of Correct Answer (B):

Since the control plane is based in the United States, all operational data such as logs and monitoring data will also be stored there, in line with MuleSoft's data handling policies.

Explanation of Incorrect Options:

Options A and D are incorrect because MuleSoft does not store logs or monitoring data in the application deployment region when the control plane is located in the United States.

Option C suggests mixed storage, which does not align with MuleSoft's data policy structure.

References: For details on data residency in CloudHub deployments, refer to MuleSoft's documentation on CloudHub control planes and data handling policies.

QUESTION DESCRIPTION:

A Mule application exposes an HTTPS endpoint and is deployed to the CloudHub Shared Worker Cloud. All traffic to that Mule application must stay inside the AWS VPC.

To what TCP port do API invocations to that Mule application need to be sent?

Correct Answer & Rationale:

Answer: D

Explanation:

Correct Answer: 8082

- Ports 8091 and 8092 are used to keep an HTTP app and an HTTPS app, respectively, private to the local VPC.

- These two ports do not apply to the shared AWS VPC / Shared Worker Cloud.

- Port 8081 is used when exposing an HTTP endpoint to the internet through the shared load balancer.

- Port 8082 is used when exposing an HTTPS endpoint to the internet through the shared load balancer.

So, API invocations should be sent to port 8082 when calling this HTTPS-based app.
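The port rules in this explanation can be captured as a small lookup table. The following is only an illustrative sketch: the table contents come from the explanation above, while `CLOUDHUB_PORTS` and `port_for` are hypothetical names and not part of any MuleSoft tool or API.

```python
# Port roles exactly as listed in the explanation above.
# CLOUDHUB_PORTS and port_for are hypothetical names used for illustration.
CLOUDHUB_PORTS = {
    8081: ("HTTP", "public"),    # exposed through the shared load balancer
    8082: ("HTTPS", "public"),   # exposed through the shared load balancer
    8091: ("HTTP", "private"),   # private to the local VPC
    8092: ("HTTPS", "private"),  # private to the local VPC
}


def port_for(protocol: str, private_to_vpc: bool) -> int:
    """Return the port that matches a protocol and an exposure type."""
    exposure = "private" if private_to_vpc else "public"
    for port, (proto, kind) in CLOUDHUB_PORTS.items():
        if proto == protocol.upper() and kind == exposure:
            return port
    raise ValueError(f"no port known for protocol {protocol!r}")
```

For the scenario in this question, `port_for("https", private_to_vpc=False)` returns 8082, the port named in the correct answer.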

References:

https://docs.mulesoft.com/runtime-manager/cloudhub-networking-guide

https://help.mulesoft.com/s/article/Configure-Cloudhub-Application-to-Send-a-HTTPS-Request-Directly-to-Another-Cloudhub-Application

https://help.mulesoft.com/s/question/0D52T00004mXXULSA4/multiple-http-listerners-on-cloudhub-one-with-port-9090

QUESTION DESCRIPTION:

An organization is implementing a Quote of the Day API that caches today's quote.

What scenario can use the CloudHub Object Store via the Object Store connector to persist the cache's state?

Correct Answer & Rationale:

Answer: D

Explanation:

Correct Answer: When there is one CloudHub deployment of the API implementation to three CloudHub workers that must share the cache state.

Key detail in the scenario:

- The CloudHub Object Store is used via the Object Store connector.

Considering this detail:

- CloudHub Object Stores have a one-to-one relationship with CloudHub Mule applications.

- An application's CloudHub Object Store CANNOT be shared through the Object Store connector among multiple Mule applications running in different regions, business groups, or customer-hosted Mule runtimes.

- If sharing is truly necessary, Anypoint Platform allows access to another application's CloudHub Object Store through the Object Store REST API, but NOT through the Object Store connector.

So, the only scenario where we can use the CloudHub Object Store via the Object Store connector to persist the cache's state is when there is one CloudHub deployment of the API implementation to multiple CloudHub workers that must share the cache state.
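The sharing rule can be pictured with a toy model in which all workers of one deployment hold a reference to the same store. This is a sketch only: `AppObjectStore` and `Worker` are hypothetical classes written for illustration, not the Object Store connector or any MuleSoft API.

```python
class AppObjectStore:
    """Toy stand-in for the single object store that belongs to one application."""

    def __init__(self):
        self._entries = {}

    def store(self, key, value):
        self._entries[key] = value

    def retrieve(self, key):
        return self._entries.get(key)


class Worker:
    """One worker of the deployment; every worker is handed the same store."""

    def __init__(self, name, object_store):
        self.name = name
        self.object_store = object_store

    def quote_of_the_day(self, today, fetch_quote):
        # Only the first worker to miss the cache fetches the quote.
        quote = self.object_store.retrieve(today)
        if quote is None:
            quote = fetch_quote()
            self.object_store.store(today, quote)
        return quote


# One deployment, three workers, one shared store.
shared_store = AppObjectStore()
workers = [Worker(f"worker-{i}", shared_store) for i in range(3)]
```

Because all three workers point at `shared_store`, a quote cached by one worker is visible to the other two, which mirrors the one-application, multiple-workers scenario in the correct answer.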

QUESTION DESCRIPTION:

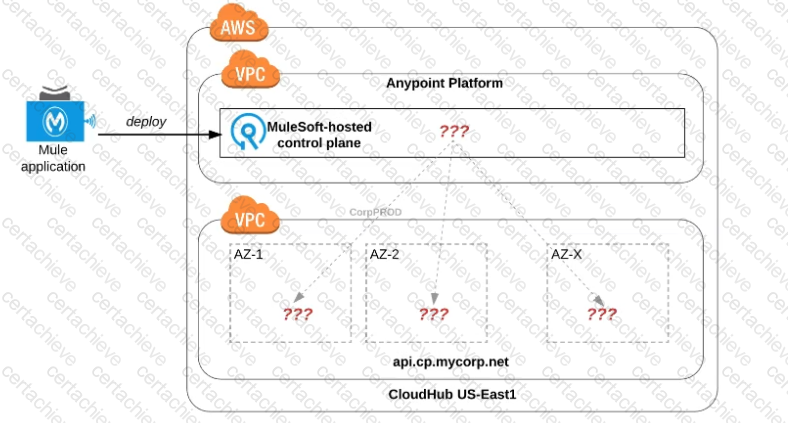

Refer to the exhibit.

An organization uses one specific CloudHub (AWS) region for all CloudHub deployments.

How are CloudHub workers assigned to availability zones (AZs) when the organization's Mule applications are deployed to CloudHub in that region?

Correct Answer & Rationale:

Answer: D

Explanation:

Correct Answer: Workers are randomly distributed across available AZs within that region.

- Currently, we can only choose the AWS region. No configuration or deployment option controls which Availability Zone (AZ) a worker is assigned to.

- The platform also has NO fixed or implicit rules that assign AZs to workers based on environment or application.

- Workers are assigned completely at random. However, CloudHub ensures high availability by assigning the workers of an application to more than one AZ, so that they do not all land in the same AZ.
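A toy simulation can make the two properties concrete: placement is random, yet the workers of one application span more than one AZ. The sketch below is an assumption-laden illustration, not CloudHub's actual placement algorithm, and `assign_workers` is a hypothetical function.

```python
import random


def assign_workers(worker_count, zones, rng=None):
    """Randomly place workers in zones, re-drawing until 2+ workers span 2+ zones.

    Illustrative only: the real platform's placement logic is not public here.
    """
    if rng is None:
        rng = random.Random()
    if worker_count >= 2 and len(set(zones)) < 2:
        raise ValueError("need at least two zones to spread multiple workers")
    while True:
        placement = [rng.choice(zones) for _ in range(worker_count)]
        # A single worker cannot be spread; otherwise require more than one AZ.
        if worker_count < 2 or len(set(placement)) > 1:
            return placement
```

Calling `assign_workers(3, ["az-a", "az-b", "az-c"])` returns three zone names chosen at random, never all identical.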

QUESTION DESCRIPTION:

An Anypoint Platform organization has been configured with an external identity provider (IdP) for identity management and client management. What credentials or token must be provided to Anypoint CLI to execute commands against the Anypoint Platform APIs?

Correct Answer & Rationale:

Answer: A

Explanation:

Correct Answer: The credentials provided by the IdP for identity management

QUESTION DESCRIPTION:

An online store's marketing team has noticed an increase in customers leaving online baskets without checking out. They suspect a technology issue is the root cause of the baskets being left behind. They approach the Center for Enablement to ask for help identifying the issue. Multiple APIs from across all the layers of their application network are involved in the shopping application.

Which feature of the Anypoint Platform can be used to view metrics from all involved APIs at the same time?

Correct Answer & Rationale:

Answer: B

Explanation:

Understanding the Need for Cross-API Monitoring:

The Center for Enablement (C4E) needs to investigate potential technical issues across multiple APIs in the application network that may be causing customers to abandon their carts.

This requires a solution that allows viewing metrics across several APIs in real time to identify any performance issues or bottlenecks.

Evaluating Anypoint Platform Features:

Built-in Dashboards: Anypoint Platform provides built-in dashboards in Anypoint Monitoring, allowing teams to view metrics from multiple APIs in a single interface. This feature is designed to monitor API performance, latency, errors, and throughput, and is ideal for tracking performance across all layers of the application network.

Custom Dashboards: While custom dashboards allow for more tailored views, the built-in dashboards already aggregate metrics for multiple APIs, making it unnecessary to build a custom solution for this scenario.

Functional Monitoring: This feature is used to set up tests to monitor specific API functionality and uptime but is not suited for tracking metrics across multiple APIs in real time.

API Manager: API Manager primarily focuses on managing API policies, contracts, and access control rather than providing detailed, real-time metrics across the entire application network.

Conclusion:

Option B (Built-in dashboards) is the best choice because it provides a comprehensive view of metrics from all APIs involved, enabling the C4E team to quickly identify any issues that may be contributing to abandoned shopping carts.

Refer to MuleSoft’s documentation on Anypoint Monitoring and built-in dashboards for more details on configuring and using these dashboards effectively.

QUESTION DESCRIPTION:

What is a typical result of using a fine-grained rather than a coarse-grained API deployment model to implement a given business process?

Correct Answer & Rationale:

Answer: B

Explanation:

Correct Answer: A higher number of discoverable API-related assets in the application network.

- A fine-grained approach does NOT give faster response times than a coarse-grained approach.

- In fact, a network of coarse-grained APIs responds faster than a network of fine-grained APIs, for the reasons below.

Fine-grained approach:

1. There are more APIs than in a coarse-grained approach.

2. More orchestration is therefore needed to deliver a piece of functionality in a business process.

3. That means more API calls and more connections to establish: more hops, more network I/O, and more integration points than in a coarse-grained approach, where fewer APIs each bundle more functionality.

4. Because of these extra hops and added latencies, a fine-grained approach has somewhat higher response times than a coarse-grained one.

5. Beyond the added latency and connections, a fine-grained approach also consumes more resources because of the larger number of APIs.

That is why fine-grained APIs are a good way to expose more reusable assets in your network and make them discoverable. However, they need more maintenance and more attention to integration points, connections, and resources, with a small compromise on network hops and response times.
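The latency argument can be worked through with simple arithmetic. The sketch below assumes a deliberately simplified model in which every API call adds a fixed network overhead and the calls happen one after another; the function name and the millisecond figures are illustrative assumptions, not measurements.

```python
def end_to_end_latency_ms(api_calls: int, per_call_overhead_ms: float,
                          business_work_ms: float) -> float:
    """Simplified model: total time = business work + one overhead per sequential call."""
    return business_work_ms + api_calls * per_call_overhead_ms


# Same business work, assumed 100 ms, with an assumed 20 ms overhead per call.
coarse_grained = end_to_end_latency_ms(api_calls=1, per_call_overhead_ms=20,
                                       business_work_ms=100)
fine_grained = end_to_end_latency_ms(api_calls=5, per_call_overhead_ms=20,
                                     business_work_ms=100)
```

Under these assumed numbers, the coarse-grained design takes 120 ms and the fine-grained design takes 200 ms, while the fine-grained design exposes five reusable assets instead of one.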

QUESTION DESCRIPTION:

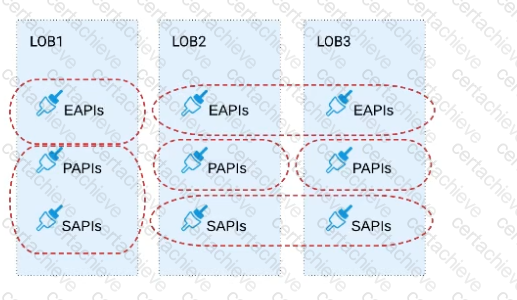

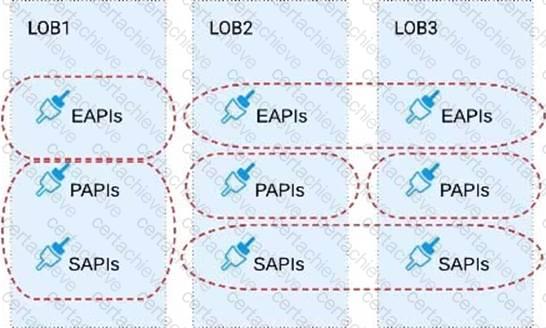

Refer to the exhibit.

Three business processes need to be implemented, and the implementations need to communicate with several different SaaS applications.

These processes are owned by separate (siloed) LOBs and are mainly independent of each other, but do share a few business entities. Each LOB has one development team and its own budget.

In this organizational context, what is the most effective approach to choose the API data models for the APIs that will implement these business processes with minimal redundancy of the data models?

A) Build several Bounded Context Data Models that align with coherent parts of the business processes and the definitions of associated business entities

B) Build distinct data models for each API to follow established micro-services and Agile API-centric practices

C) Build all API data models using XML schema to drive consistency and reuse across the organization

D) Build one centralized Canonical Data Model (Enterprise Data Model) that unifies all the data types from all three business processes, ensuring the data model is consistent and non-redundant

Correct Answer & Rationale:

Answer: A

Explanation:

Correct Answer: Build several Bounded Context Data Models that align with coherent parts of the business processes and the definitions of associated business entities.

- The options about building API data models with XML schema or following Agile API-centric practices are irrelevant to the scenario in the question, so these two are INVALID.

- Building an Enterprise Data Model (EDM) is not feasible or a good fit for this scenario, because the teams and LOBs work in silos and have different initiatives, budgets, and so on. Building an EDM needs intensive coordination among all the teams, which does not seem possible in this scenario.

So, the right fit for this scenario is to build several Bounded Context Data Models that align with coherent parts of the business processes and the definitions of associated business entities.
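As an illustration of what bounded context data models look like in practice, the sketch below defines the same business entity twice, once per context, with only the fields each context needs. The contexts, class names, and fields are all hypothetical; the question does not name them.

```python
from dataclasses import dataclass, fields


@dataclass
class SalesCustomer:
    """Customer as a hypothetical sales context sees it."""
    customer_id: str
    name: str
    credit_limit: float


@dataclass
class SupportCustomer:
    """Customer as a hypothetical support context sees it."""
    customer_id: str
    name: str
    open_ticket_count: int


def shared_fields(first, second):
    """Return the field names two context models have in common."""
    return {f.name for f in fields(first)} & {f.name for f in fields(second)}
```

Each LOB can evolve its own model independently, and `shared_fields(SalesCustomer, SupportCustomer)` shows the small overlap that the contexts still agree on.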

QUESTION DESCRIPTION:

The asset version 2.0.0 of the Order API is successfully published in Exchange and configured in API Manager with the Autodiscovery API ID correctly linked to the API implementation. A new GET method is added to the existing API specification, and after updates, the asset version of the Order API is 2.0.1.

What happens to the Autodiscovery API ID when the new asset version is updated in API Manager?

Correct Answer & Rationale:

Answer: C

Explanation:

Understanding API Autodiscovery in MuleSoft:

API Autodiscovery links an API implementation in Anypoint Platform with its configuration in API Manager. This is controlled by the API ID, which is set in the API Autodiscovery element in the Mule application.

The API ID remains consistent across minor updates to the API asset version in Exchange (e.g., from 2.0.0 to 2.0.1) as long as it is the same API.

Effect of Asset Version Update on API Autodiscovery:

When the asset version is updated (e.g., from 2.0.0 to 2.0.1), the API ID remains the same. Therefore, no changes are needed in the Autodiscovery configuration within the Mule application. Autodiscovery will continue to link the API implementation to the latest version in API Manager.

Evaluating the Options:

Option A: Incorrect, as the API ID does not automatically change with minor asset version updates.

Option B: Incorrect, as the API ID remains the same, so no update is needed in the API implementation.

Option C (Correct Answer): The API ID does not change, so no changes are necessary in the API implementation for the new asset version.

Option D: Incorrect, as there is no need to update the API implementation in the Autodiscovery global element for minor version changes.

Conclusion:

Option C is the correct answer, as the API ID remains unchanged with minor version updates, and no changes are needed in the API Autodiscovery configuration.

Refer to MuleSoft documentation on API Autodiscovery and version management for more details.
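The relationship described above can be modeled in a few lines: the asset version is one attribute of the managed API instance, and the API ID is another that does not move when the version does. This is a conceptual sketch only; `ApiInstance`, `update_asset_version`, and the ID value are hypothetical and do not reflect API Manager's internal data model.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ApiInstance:
    """Hypothetical view of an API instance as managed in API Manager."""
    api_id: int          # the ID referenced by the Autodiscovery element
    asset_version: str   # the Exchange asset version backing the instance


def update_asset_version(instance: ApiInstance, new_version: str) -> ApiInstance:
    """Return the same instance with a new asset version; the API ID is untouched."""
    return replace(instance, asset_version=new_version)


order_api = ApiInstance(api_id=12345678, asset_version="2.0.0")  # made-up ID
updated = update_asset_version(order_api, "2.0.1")
```

After the update, `updated.api_id` equals `order_api.api_id`, which is why the Mule application's Autodiscovery configuration needs no change.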

A Stepping Stone for Enhanced Career Opportunities

Holding the MuleSoft Certified Platform Architect certification enhances your credibility and marketability worldwide. This formal recognition can translate into tangible career advancement: it helps you move into the job roles you want and can support a higher income. Beyond the resume, your expertise gives you the confidence to act as a dependable professional who solves real-world business challenges.

Success in the MuleSoft MCPA-Level-1 certification exam keeps you visible and relevant in the fast-evolving tech landscape. It is a long-term investment in your career that gives you a competitive advantage over non-certified peers and makes you eligible for further exams in your domain.

What You Need to Ace MuleSoft Exam MCPA-Level-1

Achieving success in the MCPA-Level-1 MuleSoft exam requires a blend of clear understanding of all the exam topics, practical skills, and practice with the actual format. There is no room for cramming, memorizing facts, or relying on a few significant exam topics. You need to develop a comprehensive grasp of the syllabus, with both theoretical and practical command.

Here is a comprehensive strategy layout to secure peak performance in MCPA-Level-1 certification exam:

- Develop a rock-solid theoretical clarity of the exam topics

- Begin with easier and more familiar topics of the exam syllabus

- Make sure you have command of the fundamental concepts

- Focus on understanding why each concept matters

- Ensure hands-on practice, as the exam tests your ability to apply knowledge

- Develop a study routine that manages your time, because preparation can become a major time sink if you are slow

- Find a comprehensive and streamlined study resource to help you

Ensuring Outstanding Results in Exam MCPA-Level-1!

Given the above prep strategy for the MCPA-Level-1 MuleSoft exam, your primary need is to find a comprehensive study resource. Without one, exam success can be a daunting task. The most important point to keep in mind is to rely on one particular resource instead of depending on multiple sources. It should be an all-inclusive resource that provides conceptual explanations, hands-on practical exercises, and realistic assessment tools.

Certachieve: A Reliable All-inclusive Study Resource

Certachieve offers multiple study tools to do thorough and rewarding MCPA-Level-1 exam prep. Here's an overview of Certachieve's toolkit:

MuleSoft MCPA-Level-1 PDF Study Guide

This premium guide contains MuleSoft MCPA-Level-1 exam questions and answers that cover the exam syllabus in easy language. The information guides the candidate's focus to the most critical topics. The supporting explanations and examples build both the knowledge and the practical confidence candidates need to pass the exam. A free demo of the MuleSoft MCPA-Level-1 study guide PDF is also available for download, so you can examine the contents and quality of the study material.

MuleSoft MCPA-Level-1 Practice Exams

Practicing MCPA-Level-1 exam questions is an essential part of your exam preparation. To help you with this task, Certachieve offers the MuleSoft MCPA-Level-1 Testing Engine, which simulates multiple tests that resemble the real exam. They are valuable for developing your grasp of the material, understanding your strengths and weaknesses, and making up deficiencies in time.

These comprehensive materials are engineered to streamline your preparation process, providing a direct and efficient path to mastering the exam's requirements.

MuleSoft MCPA-Level-1 exam dumps

These realistic dumps include the most significant questions that may appear in your upcoming exam. Studying MCPA-Level-1 exam dumps can increase your chances of success and help you earn a strong score.

MuleSoft MCPA-Level-1 MuleSoft Certified Platform Architect FAQ

There is only a formal set of prerequisites for taking the MCPA-Level-1 MuleSoft exam, and MuleSoft may change the basic eligibility criteria. Generally, thorough theoretical knowledge and hands-on practice of the syllabus topics prepare you to sit the exam.

It requires a comprehensive study plan that includes exam preparation from an authentic, reliable, and exam-oriented study resource. It should provide you with MuleSoft MCPA-Level-1 exam questions that focus on mastering core topics. This resource should also offer extensive hands-on practice using the MuleSoft MCPA-Level-1 Testing Engine.

Finally, it should also introduce you to the expected questions with the help of MuleSoft MCPA-Level-1 exam dumps to enhance your readiness for the exam.

Like any other MuleSoft certification exam, the MuleSoft Certified Platform Architect exam is tough and challenging. In particular, its extensive syllabus makes MCPA-Level-1 exam prep hard. The actual exam requires candidates to develop in-depth knowledge of all syllabus content along with practical knowledge. The most reliable way to pass the exam on the first try is diligent study and lab practice before taking the exam.

The MCPA-Level-1 MuleSoft exam usually comprises 100 to 120 questions. However, the number of questions may vary. The reason is the format of the exam that may include unscored and experimental questions sometimes. Mostly, the actual exam consists of various question formats, including multiple-choice, simulations, and drag-and-drop.

It actually depends on one's personal keenness and absorption level. However, usually people take three to six weeks to thoroughly complete the MuleSoft MCPA-Level-1 exam prep subject to their prior experience and the engagement with study. The prime factor is the observation of consistency in studies and this factor may reduce the total time duration.

Yes. MuleSoft has transitioned to v1.1, which places more weight on Network Automation, Security Fundamentals, and AI integration. Our 2026 bank reflects these specific updates.

Standard dumps rely on pattern recognition. If MuleSoft changes a single IP address in a topology, memorized answers fail. Our rationales teach you the logic so you can solve the problem regardless of the phrasing.
