The Databricks Certified Data Engineer Professional Exam (Databricks-Certified-Professional-Data-Engineer)

Passing Databricks Databricks Certification exam ensures for the successful candidate a powerful array of professional and personal benefits. The first and the foremost benefit comes with a global recognition that validates your knowledge and skills, making possible your entry into any organization of your choice.

Why CertAchieve is Better than Standard Databricks-Certified-Professional-Data-Engineer Dumps

In 2026, Databricks uses variable topologies. Basic dumps will fail you.

| Quality Standard | Generic Dump Sites | CertAchieve Premium Prep |

|---|---|---|

| Technical Explanation | None (Answer Key Only) | Step-by-Step Expert Rationales |

| Syllabus Coverage | Often Outdated (v1.0) | 2026 Updated (Latest Syllabus) |

| Scenario Mastery | Blind Memorization | Conceptual Logic & Troubleshooting |

| Instructor Access | No Post-Sale Support | 24/7 Professional Help |

Success backed by proven exam prep tools

Real exam match rate reported by verified users

Consistently high performance across certifications

Efficient prep that reduces study hours significantly

Databricks Databricks-Certified-Professional-Data-Engineer Exam Domains Q&A

Certified instructors verify every question for 100% accuracy, providing detailed, step-by-step explanations for each.

QUESTION DESCRIPTION:

A query is taking too long to run. After investigating the Spark UI, the data engineer discovered a significant amount of disk spill . The compute instance being used has a core-to-memory ratio of 1:2.

What are the two steps the data engineer should take to minimize spillage? (Choose 2 answers)

Correct Answer & Rationale:

Answer: A, D

Explanation:

Databricks recommends addressing disk spilling —which occurs when Spark tasks run out of memory—by increasing memory per core and controlling partition size. Selecting an instance type with a higher memory-to-core ratio (A) provides each task with more available RAM, directly reducing the chance of spilling to disk. Additionally, reducing spark.sql.files.maxPartitionBytes (D) creates smaller partitions, preventing any single task from holding too much data in memory. Increasing partition size (C) or disk capacity (B) does not solve memory bottlenecks, and bandwidth (E) affects network I/O, not spill behavior. Therefore, the correct actions are A and D .

QUESTION DESCRIPTION:

Two data engineers are working on the same Databricks notebook in separate branches. Both have edited the same section of code. When one tries to merge the other’s branch into their own using the Databricks Git folders UI, a merge conflict occurs on that notebook file. The UI highlights the conflict and presents options for resolution.

How should the data engineers resolve this merge conflict using Databricks Git folders?

Correct Answer & Rationale:

Answer: D

Explanation:

In the Databricks Git folders integration, when merge conflicts arise in notebooks, the UI provides a visual diff editor that highlights conflicting code segments. Users can manually choose which changes to keep from each branch, edit directly in the notebook UI, and remove conflict markers.

After resolving, the engineer must mark the conflict as resolved, save, and commit the final version.

This process ensures that both contributors’ valid code segments are merged correctly and version history is maintained.

Forcing a push (C) or deleting notebooks (B) introduces data loss or versioning issues. Aborting without review (A) violates collaborative best practices. Therefore, D is the only correct and Databricks-approved way to resolve notebook merge conflicts.

QUESTION DESCRIPTION:

A data engineer is tasked with ensuring that a Delta table in Databricks continuously retains deleted files for 15 days (instead of the default 7 days), in order to permanently comply with the organization’s data retention policy.

Which code snippet correctly sets this retention period for deleted files?

Correct Answer & Rationale:

Answer: A

Explanation:

In Delta Lake , the property delta.deletedFileRetentionDuration controls how long deleted data files are retained before being permanently removed during a VACUUM operation.

By default, this retention duration is set to 7 days .

To comply with stricter retention requirements, organizations can explicitly update the table property using an ALTER TABLE statement.

Option A uses the correct SQL command:

ALTER TABLE my_table SET TBLPROPERTIES ( ' delta.deletedFileRetentionDuration ' = ' interval 15 days ' )

This updates the Delta table metadata so that all future operations respect the 15-day retention policy for deleted files.

Why not the others?

B : This code incorrectly tries to set the property via the DeltaTable API. Delta’s Python API does not expose direct attributes like deletedFileRetentionDuration; instead, properties must be set through ALTER TABLE or DataFrameWriter options.

C : VACUUM ... RETAIN specifies a one-time file cleanup action (e.g., retaining 15 hours of history), not a persistent retention policy. It cannot be used to set a continuous retention duration .

D : Setting spark.conf applies a session-level configuration and does not permanently update the table’s retention metadata. Once the session ends, this configuration is lost.

Therefore, Option A is the correct and documented approach for persistently enforcing a 15-day deleted file retention period in Delta Lake.

QUESTION DESCRIPTION:

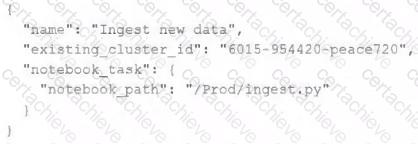

A junior data engineer has configured a workload that posts the following JSON to the Databricks REST API endpoint 2.0/jobs/create .

Assuming that all configurations and referenced resources are available, which statement describes the result of executing this workload three times?

Correct Answer & Rationale:

Answer: C

Explanation:

This is the correct answer because the JSON posted to the Databricks REST API endpoint 2.0/jobs/create defines a new job with a name, an existing cluster id, and a notebook task. However, it does not specify any schedule or trigger for the job execution. Therefore, three new jobs with the same name and configuration will be created in the workspace, but none of them will be executed until they are manually triggered or scheduled. Verified References: [Databricks Certified Data Engineer Professional], under “Monitoring and Logging” section; [Databricks Documentation], under “Jobs API - Create” section.

QUESTION DESCRIPTION:

Which statement describes the default execution mode for Databricks Auto Loader?

Correct Answer & Rationale:

Answer: A

Explanation:

Databricks Auto Loader simplifies and automates the process of loading data into Delta Lake. The default execution mode of the Auto Loader identifies new files by listing the input directory. It incrementally and idempotently loads these new files into the target Delta Lake table. This approach ensures that files are not missed and are processed exactly once, avoiding data duplication. The other options describe different mechanisms or integrations that are not part of the default behavior of the Auto Loader.

QUESTION DESCRIPTION:

A data engineer has a Delta table orders with deletion vectors enabled. The engineer executes the following command:

DELETE FROM orders WHERE status = ' cancelled ' ;

What should be the behavior of deletion vectors when the command is executed?

Correct Answer & Rationale:

Answer: D

Explanation:

Deletion vectors (DVs) in Delta Lake optimize delete operations by marking deleted rows logically in metadata rather than rewriting Parquet files. When a DELETE statement is executed, affected rows are tracked by DVs in the transaction log. The data remains in the underlying files but is filtered out during query reads. This improves performance for frequent deletes and updates since file rewrites are deferred. Physical data removal only occurs when a VACUUM command is later executed. The Databricks documentation confirms: “With deletion vectors, deleted rows are marked in metadata and skipped at read time, avoiding file rewrites.” Thus, rows are marked as deleted in metadata—not in files.

QUESTION DESCRIPTION:

A transactions table has been liquid clustered on the columns product_id, user_id, and event_date.

Which operation lacks support for cluster on write?

Correct Answer & Rationale:

Answer: A

Explanation:

Delta Lake ' s Liquid Clustering is an advanced feature that improves query performance by dynamically clustering data without requiring costly compaction steps like traditional Z-ordering.

When performing writes to a Liquid Clustered table, some write operations automatically maintain clustering, while others do not .

Explanation of Each Option:

(A) spark.writestream.format( ' delta ' ).mode( ' append ' ) (Correct Answer)

Reason: Streaming writes (writestream) do not support Liquid Clustering because streaming data arrives in micro-batches.

Since Liquid Clustering needs efficient global reorganization of files, streaming append operations don ' t provide sufficient data volume at a time to be effectively clustered.

Delta Lake documentation states that Liquid Clustering is only supported for batch writes.

(B) CTAS and RTAS statements

Reason: CREATE TABLE AS SELECT (CTAS) and REPLACE TABLE AS SELECT (RTAS) are batch operations and can enforce Liquid Clustering.

These operations create or replace a table based on a query result, and since they are batch-based, Liquid Clustering applies.

(C) INSERT INTO operations

Reason: INSERT INTO is supported for Liquid Clustering because it is a batch operation.

While it may not be as efficient as MERGE or COPY INTO, clustering is applied upon execution.

(D) spark.write.format( ' delta ' ).mode( ' append ' )

Reason: Batch append operations are supported for Liquid Clustering.

Unlike streaming append, batch writes allow the optimizer to re-cluster data efficiently.

Conclusion:

Since streaming append operations do not support Liquid Clustering , option (A) is the correct answer.

QUESTION DESCRIPTION:

A data engineer is using Lakeflow Declarative Pipelines Expectations feature to track the data quality of their incoming sensor data. Periodically, sensors send bad readings that are out of range, and they are currently flagging those rows with a warning and writing them to the silver table along with the good data. They’ve been given a new requirement – the bad rows need to be quarantined in a separate quarantine table and no longer included in the silver table.

This is the existing code for their silver table:

@dlt.table

@dlt.expect( " valid_sensor_reading " , " reading < 120 " )

def silver_sensor_readings():

return spark.readStream.table( " bronze_sensor_readings " )

What code will satisfy the requirements?

Correct Answer & Rationale:

Answer: A

Explanation:

Lakeflow Declarative Pipelines (DLT) supports data quality enforcement using @dlt.expect, @dlt.expect_or_drop, and @dlt.expect_all.

@dlt.expect applies a rule and records whether rows pass or fail the condition but does not drop failing rows . Instead, failing rows can be written to a quarantine table.

@dlt.expect_or_drop enforces that only rows passing the condition flow downstream, dropping bad records automatically.

In this case, the requirement is:

Good rows (reading < 120) go to the silver table .

Bad rows (reading > = 120) go to a quarantine table .

Bad rows should not be included in silver.

The correct implementation is Option A , where:

The silver table uses @dlt.expect to validate reading < 120. These rows flow normally.

The quarantine table applies an expectation for reading > = 120, ensuring bad records are captured separately.

Other options are incorrect:

Option B/D: These either use expect_or_drop incorrectly or apply wrong conditions, leading to dropped rows without quarantining properly.

Option C: Uses expect_or_drop for both tables, which would discard bad rows instead of persisting them into a quarantine table.

Thus, Option A meets the business requirement to split good and bad data streams while ensuring both are captured for auditing and processing.

QUESTION DESCRIPTION:

Two of the most common data locations on Databricks are the DBFS root storage and external object storage mounted with dbutils.fs.mount().

Which of the following statements is correct?

Correct Answer & Rationale:

Answer: A

Explanation:

DBFS is a file system protocol that allows users to interact with files stored in object storage using syntax and guarantees similar to Unix file systems 1 . DBFS is not a physical file system, but a layer over the object storage that provides a unified view of data across different data sources 1 . By default, the DBFS root is accessible to all users in the workspace, and the access to mounted data sources depends on the permissions of the storage account or container 2 . Mounted storage volumes do not need to have full public read and write permissions, but they do require a valid connection string or access key to be provided when mounting 3 . Both the DBFS root and mounted storage can be accessed when using %sh in a Databricks notebook, as long as the cluster has FUSE enabled 4 . The DBFS root does not store files in ephemeral block volumes attached to the driver, but in the object storage associated with the workspace 1 . Mounted directories will persist saved data to external storage between sessions, unless they are unmounted or deleted 3 . References: DBFS , Work with files on Azure Databricks , Mounting cloud object storage on Azure Databricks , Access DBFS with FUSE

QUESTION DESCRIPTION:

A data team is automating a daily multi-task ETL pipeline in Databricks. The pipeline includes a notebook for ingesting raw data, a Python wheel task for data transformation, and a SQL query to update aggregates. They want to trigger the pipeline programmatically and see previous runs in the GUI. They need to ensure tasks are retried on failure and stakeholders are notified by email if any task fails.

Which two approaches will meet these requirements? (Choose 2 answers)

Correct Answer & Rationale:

Answer: B, C

Explanation:

Databricks Jobs supports defining multi-task workflows that include notebooks, SQL statements, and Python wheel tasks. These can be configured with retry policies, dependency chains, and failure notifications. The correct practice, as stated in the documentation, is to use the Jobs REST API (/jobs/create) or Databricks Asset Bundles to define multi-task jobs, and then trigger them programmatically using /jobs/run-now, CLI, or SDK. This allows the team to maintain full job history, handle retries automatically, and receive alerts via configured email notifications. Using /jobs/runs/submit creates one-off ad hoc runs without maintaining dependency visibility. Therefore, options B and C together satisfy the operational, automation, and governance requirements.

A Stepping Stone for Enhanced Career Opportunities

Your profile having Databricks Certification certification significantly enhances your credibility and marketability in all corners of the world. The best part is that your formal recognition pays you in terms of tangible career advancement. It helps you perform your desired job roles accompanied by a substantial increase in your regular income. Beyond the resume, your expertise imparts you confidence to act as a dependable professional to solve real-world business challenges.

Your success in Databricks Databricks-Certified-Professional-Data-Engineer certification exam makes your visible and relevant in the fast-evolving tech landscape. It proves a lifelong investment in your career that give you not only a competitive advantage over your non-certified peers but also makes you eligible for a further relevant exams in your domain.

What You Need to Ace Databricks Exam Databricks-Certified-Professional-Data-Engineer

Achieving success in the Databricks-Certified-Professional-Data-Engineer Databricks exam requires a blending of clear understanding of all the exam topics, practical skills, and practice of the actual format. There's no room for cramming information, memorizing facts or dependence on a few significant exam topics. It means your readiness for exam needs you develop a comprehensive grasp on the syllabus that includes theoretical as well as practical command.

Here is a comprehensive strategy layout to secure peak performance in Databricks-Certified-Professional-Data-Engineer certification exam:

- Develop a rock-solid theoretical clarity of the exam topics

- Begin with easier and more familiar topics of the exam syllabus

- Make sure your command on the fundamental concepts

- Focus your attention to understand why that matters

- Ensure hands-on practice as the exam tests your ability to apply knowledge

- Develop a study routine managing time because it can be a major time-sink if you are slow

- Find out a comprehensive and streamlined study resource for your help

Ensuring Outstanding Results in Exam Databricks-Certified-Professional-Data-Engineer!

In the backdrop of the above prep strategy for Databricks-Certified-Professional-Data-Engineer Databricks exam, your primary need is to find out a comprehensive study resource. It could otherwise be a daunting task to achieve exam success. The most important factor that must be kep in mind is make sure your reliance on a one particular resource instead of depending on multiple sources. It should be an all-inclusive resource that ensures conceptual explanations, hands-on practical exercises, and realistic assessment tools.

Certachieve: A Reliable All-inclusive Study Resource

Certachieve offers multiple study tools to do thorough and rewarding Databricks-Certified-Professional-Data-Engineer exam prep. Here's an overview of Certachieve's toolkit:

Databricks Databricks-Certified-Professional-Data-Engineer PDF Study Guide

This premium guide contains a number of Databricks Databricks-Certified-Professional-Data-Engineer exam questions and answers that give you a full coverage of the exam syllabus in easy language. The information provided efficiently guides the candidate's focus to the most critical topics. The supportive explanations and examples build both the knowledge and the practical confidence of the exam candidates required to confidently pass the exam. The demo of Databricks Databricks-Certified-Professional-Data-Engineer study guide pdf free download is also available to examine the contents and quality of the study material.

Databricks Databricks-Certified-Professional-Data-Engineer Practice Exams

Practicing the exam Databricks-Certified-Professional-Data-Engineer questions is one of the essential requirements of your exam preparation. To help you with this important task, Certachieve introduces Databricks Databricks-Certified-Professional-Data-Engineer Testing Engine to simulate multiple real exam-like tests. They are of enormous value for developing your grasp and understanding your strengths and weaknesses in exam preparation and make up deficiencies in time.

These comprehensive materials are engineered to streamline your preparation process, providing a direct and efficient path to mastering the exam's requirements.

Databricks Databricks-Certified-Professional-Data-Engineer exam dumps

These realistic dumps include the most significant questions that may be the part of your upcoming exam. Learning Databricks-Certified-Professional-Data-Engineer exam dumps can increase not only your chances of success but can also award you an outstanding score.

Databricks Databricks-Certified-Professional-Data-Engineer Databricks Certification FAQ

There are only a formal set of prerequisites to take the Databricks-Certified-Professional-Data-Engineer Databricks exam. It depends of the Databricks organization to introduce changes in the basic eligibility criteria to take the exam. Generally, your thorough theoretical knowledge and hands-on practice of the syllabus topics make you eligible to opt for the exam.

It requires a comprehensive study plan that includes exam preparation from an authentic, reliable and exam-oriented study resource. It should provide you Databricks Databricks-Certified-Professional-Data-Engineer exam questions focusing on mastering core topics. This resource should also have extensive hands on practice using Databricks Databricks-Certified-Professional-Data-Engineer Testing Engine.

Finally, it should also introduce you to the expected questions with the help of Databricks Databricks-Certified-Professional-Data-Engineer exam dumps to enhance your readiness for the exam.

Like any other Databricks Certification exam, the Databricks Certification is a tough and challenging. Particularly, it's extensive syllabus makes it hard to do Databricks-Certified-Professional-Data-Engineer exam prep. The actual exam requires the candidates to develop in-depth knowledge of all syllabus content along with practical knowledge. The only solution to pass the exam on first try is to make sure diligent study and lab practice prior to take the exam.

The Databricks-Certified-Professional-Data-Engineer Databricks exam usually comprises 100 to 120 questions. However, the number of questions may vary. The reason is the format of the exam that may include unscored and experimental questions sometimes. Mostly, the actual exam consists of various question formats, including multiple-choice, simulations, and drag-and-drop.

It actually depends on one's personal keenness and absorption level. However, usually people take three to six weeks to thoroughly complete the Databricks Databricks-Certified-Professional-Data-Engineer exam prep subject to their prior experience and the engagement with study. The prime factor is the observation of consistency in studies and this factor may reduce the total time duration.

Yes. Databricks has transitioned to v1.1, which places more weight on Network Automation, Security Fundamentals, and AI integration. Our 2026 bank reflects these specific updates.

Standard dumps rely on pattern recognition. If Databricks changes a single IP address in a topology, memorized answers fail. Our rationales teach you the logic so you can solve the problem regardless of the phrasing.

Top Exams & Certification Providers

New & Trending

- New Released Exams

- Related Exam

- Hot Vendor