The Google Professional Machine Learning Engineer (Professional-Machine-Learning-Engineer)

Passing Google Machine Learning Engineer exam ensures for the successful candidate a powerful array of professional and personal benefits. The first and the foremost benefit comes with a global recognition that validates your knowledge and skills, making possible your entry into any organization of your choice.

Why CertAchieve is Better than Standard Professional-Machine-Learning-Engineer Dumps

In 2026, Google uses variable topologies. Basic dumps will fail you.

| Quality Standard | Generic Dump Sites | CertAchieve Premium Prep |

|---|---|---|

| Technical Explanation | None (Answer Key Only) | Step-by-Step Expert Rationales |

| Syllabus Coverage | Often Outdated (v1.0) | 2026 Updated (Latest Syllabus) |

| Scenario Mastery | Blind Memorization | Conceptual Logic & Troubleshooting |

| Instructor Access | No Post-Sale Support | 24/7 Professional Help |

Success backed by proven exam prep tools

Real exam match rate reported by verified users

Consistently high performance across certifications

Efficient prep that reduces study hours significantly

Google Professional-Machine-Learning-Engineer Exam Domains Q&A

Certified instructors verify every question for 100% accuracy, providing detailed, step-by-step explanations for each.

QUESTION DESCRIPTION:

You work for a company that captures live video footage of checkout areas in their retail stores You need to use the live video footage to build a mode! to detect the number of customers waiting for service in near real time You want to implement a solution quickly and with minimal effort How should you build the model?

Correct Answer & Rationale:

Answer: A

Explanation:

According to the official exam guide 1 , one of the skills assessed in the exam is to “design, build, and productionalize ML models to solve business challenges using Google Cloud technologies”. The Vertex AI Vision Occupancy Analytics model 2 is a specialized pre-built vision model that lets you count people or vehicles given specific inputs you add in video frames. It provides advanced features such as active zones counting, line crossing counting, and dwelling detection. This model is suitable for the use case of detecting the number of customers waiting for service in near real time. You can easily create and deploy an occupancy analytics application using Vertex AI Vision 3 . The other options are not relevant or optimal for this scenario. References :

Professional ML Engineer Exam Guide

Occupancy analytics guide

Create an occupancy analytics app with BigQuery forecasting

Google Professional Machine Learning Certification Exam 2023

Latest Google Professional Machine Learning Engineer Actual Free Exam Questions

QUESTION DESCRIPTION:

You are training an ML model using data stored in BigQuery that contains several values that are considered Personally Identifiable Information (Pll). You need to reduce the sensitivity of the dataset before training your model. Every column is critical to your model. How should you proceed?

Correct Answer & Rationale:

Answer: B

Explanation:

The best option for reducing the sensitivity of the dataset before training the model is to use the Cloud Data Loss Prevention (DLP) API to scan for sensitive data, and use Dataflow with the DLP API to encrypt sensitive values with Format Preserving Encryption. This option allows you to keep every column in the dataset, while protecting the sensitive data from unauthorized access or exposure. The Cloud DLP API can detect and classify various types of sensitive data, such as names, email addresses, phone numbers, credit card numbers, and more 1 . Dataflow can create scalable and reliable pipelines to process large volumes of data from BigQuery and other sources 2 . Format Preserving Encryption (FPE) is a technique that encrypts sensitive data while preserving its original format and length, which can help maintain the utility and validity of the data 3 . By using Dataflow with the DLP API, you can apply FPE to the sensitive values in the dataset, and store the encrypted data in BigQuery or another destination. Yo u can also use the same pipeline to decrypt the data when needed, by using the same encryption key and method 4 .

The other options are not as suitable as option B, for the following reasons:

Option A: Using Dataflow to ingest the columns with sensitive data from BigQuery, and then randomize the values in each sensitive column, would reduce the sensitivity of the data, but also the utility and accuracy of the data. Randomization is a technique that replaces sensitive data with random values, which can prevent re-identification of t he data, but also distort the distribution and relationships of the data 3 . This can affect the performance and quality of the ML model, especially if every column is critical to the model.

Option C: Using the Cloud DLP API to scan for sensitive data, and use Dataflow to replace all sensitive data by using the encryption algorithm AES-256 with a salt, would reduce the sensitivity of the data, but also the utility and validity of the data. AES-256 is a symmetric encryption algorithm that uses a 256-bit key to encrypt and decrypt data. A salt is a random value that is added to the data before encryption, to increase the randomness and security of the encrypted data. However, AES-256 does not preserve the format or length of the original data, which can cause problems when storing or processing the data. For example, if the original data is a 10-digit phone number, AES-256 would produce a much longer and different string, which can break the schema or logic of the dataset 3 .

Option D: Before training, using BigQuery to select only the columns that do not contain sensitive data, and creating an authorized view of the data so that sensitive values cannot be accessed by unauthorized individuals, would reduce the exposure of the sensitive data, but also the completeness and relevance of the data. An authorized view is a BigQuery view that allows you to share query results with particular users or groups, without giving them access to the underlying tables. However, this option assumes that you can identify the columns that do not contain sensitive data, which may not be easy or accurate. Moreover, this option would remove some columns from the dataset, which can affect the performance and quality of the ML model, especially if every column is critical to the model.

QUESTION DESCRIPTION:

You need to use TensorFlow to train an image classification model. Your dataset is located in a Cloud Storage directory and contains millions of labeled images Before training the model, you need to prepare the data. You want the data preprocessing and model training workflow to be as efficient scalable, and low maintenance as possible. What should you do?

Correct Answer & Rationale:

Answer: A

Explanation:

TFRecord is a binary file format that stores your data as a sequence of binary strings 1 . TFRecord files are efficient, scalable, and easy to process 1 . Sharding is a technique that splits a large file into smaller files, which can improve parallelism and perfo rmance 2 . Dataflow is a service that allows you to create and run data processing pipelines on G oogle Cloud 3 . Dataflow can create sharded TFRecord files from your images in a Cloud Storage directory 4 .

tf.data.TFRecordDataset is a class that allows you to read and parse TFRecord files in TensorFlow. You can use this class to create a tf.data.Dataset object that represents your input data for training. tf.data.Dataset is a high-level API that provides various methods to transform, batch, shuffle, and prefetch your data.

Vertex AI Training is a service that allows you to train your custom models on Google Cloud using various hardware accelerators, such as GPUs. Vertex AI Training supports TensorFlow models and can read data from Cloud Storage. You can use Vertex AI Training to train your image classification model by using a V100 GPU, which is a powerful and fast GPU for deep learning.

QUESTION DESCRIPTION:

Your data science team needs to rapidly experiment with various features, model architectures, and hyperparameters. They need to track the accuracy metrics for various experiments and use an API to query the metrics over time. What should they use to track and report their experiments while minimizing manual effort?

Correct Answer & Rationale:

Answer: C

Explanation:

AI Platform Training is a service that allows you to run your machine learning experiments on Google Cloud using various features, model architectures, and hyperparameters. You can use AI Platform Training to scale up your experiments, leverage distributed training, and access specialized hardware such as GPUs and TPUs 1 . Cloud Monitoring is a service that collects and analyzes metrics, logs, and traces from Google Cloud, AWS, and other sources. You can use Cloud Monitoring to create dashboards, alerts, and reports based on your data 2 . The Monitoring API is an interface that allows you to programmatically access and manipulate your monitoring data 3 .

By using AI Platform Training and Cloud Monitoring, you can track and report your experiments while minimizing manual effort. You can write the accuracy metrics from your experiments to Cloud Monitoring usin g the AI Platform Training Python package 4 . You can then query the results using the Monitoring API and compare the performance of different experiments. You can also visualize the metrics in the Cloud Console or create custom dashboards and alerts 5 . Therefore, using AI Platform Training and Cloud Monitoring is the best option for this use case.

QUESTION DESCRIPTION:

One of your models is trained using data provided by a third-party data broker. The data broker does not reliably notify you of formatting changes in the data. You want to make your model training pipeline more robust to issues like this. What should you do?

Correct Answer & Rationale:

Answer: A

Explanation:

TensorFlow Data Validation (TFDV) is a library that helps you understand, validate, and monitor your data for machine learning. It can automatically detect and report schema anomalies, such as missing features, new features, or different data types, in your data. It can also generate descriptive statistics and data visualizations to help you explore and debug your data. TFDV can be integrated with your model training pipeline to ensure data quality and consistency throughout the machine learning lifecycle. References :

TensorFlow Data Validation

Data Validation | TensorFlow

Data Validation | Machin e Learning Crash Course | Google Developers

QUESTION DESCRIPTION:

You are creating a retraining policy for a customer churn prediction model deployed in Vertex AI. New training data is added weekly. You want to implement a model retraining process that minimizes cost and effort. What should you do?

Correct Answer & Rationale:

Answer: D

Explanation:

In the context of MLOps on Google Cloud and Vertex AI, the goal is to balance model performance with operational efficiency. Here is why Option D is the correct strategy for minimizing cost and effort while maintaining reliability:

Data Drift and Model Decay: In production environments, the distribution of live data often changes over time (a phenomenon known as Training-Serving Skew or Data Drift ). If the customer attributes in the real world no longer match the data the model was trained on, the model’s predictive power will degrade.

Vertex AI Model Monitoring: Vertex AI provides built-in tools to monitor for Feature Attribution Drift and Training-Serving Skew . By setting up alerts for these shifts, you implement " Performance-based " or " Condition-based " retraining. This is more cost-effective than retraining every week (Option C), which might use expensive compute resources to retrain a model that is still performing perfectly.

Why other options are incorrect:

Option A: Latency is an infrastructure/engineering metric, not a predictive quality metric. Retraining the model will not fix latency issues caused by high traffic; that would require scaling your prediction nodes.

Option B: While accuracy is important, waiting for a 10% drop on a new dataset often means the model has already been underperforming in production for some time. Furthermore, calculating accuracy requires " ground truth " (actual labels), which may not be available immediately for churn.

Option C: Retraining weekly regardless of performance leads to unnecessary compute costs and engineering overhead if the data hasn ' t changed significantly.

QUESTION DESCRIPTION:

Your team needs to build a model that predicts whether images contain a driver ' s license, passport, or credit card. The data engineering team already built the pipeline and generated a dataset composed of 10,000 images with driver ' s licenses, 1,000 images with passports, and 1,000 images with credit cards. You now have to train a model with the following label map: [ ' driversjicense ' , ' passport ' , ' credit_card ' ]. Which loss function should you use?

Correct Answer & Rationale:

Answer: C

Explanation:

Categorical cross-entropy is a loss function that is suitable for multi-class classification problems, where the target variable has more than two possible values. Categorical cross-entropy measures the difference between the true probability distribution of the target classes and the predicted probability distribution of the model. It is defined as:

L = - sum(y_i * log(p_i))

where y_i is the true probability of class i, and p_i is the predicted probability of class i. Categorical cross-entropy penalizes the model for making incorrect predictions, and encourages the model to assign high probabilities to the correct classes and low probabilities to the incorrect classes.

For the use case of building a model that predicts whether images contain a driver’s license, passport, or credit card, categorical cross-entropy is the appropriate loss function to use. This is because the problem is a multi-class classification problem, where the target variable has three possible values: [‘drivers_license’, ‘passport’, ‘credit_card’]. The label map is a list that maps the class names to the class indices, such that ‘drivers_license’ corresponds to index 0, ‘passport’ corresponds to index 1, and ‘credit_card’ corresponds to index 2. The model should output a probability distribution over the three classes for each image, and the categorical cross-entropy loss function should compare the output with the true labels. Therefore, categorical cross-entropy is the best loss function for this use case.

QUESTION DESCRIPTION:

You are developing a recommendation engine for an online clothing store. The historical customer transaction data is stored in BigQuery and Cloud Storage. You need to perform exploratory data analysis (EDA), preprocessing and model training. You plan to rerun these EDA, preprocessing, and training steps as you experiment with different types of algorithms. You want to minimize the cost and development effort of running these steps as you experiment. How should you configure the environment?

Correct Answer & Rationale:

Answer: A

Explanation:

Cost-effectiveness: User-managed notebooks in Vertex AI Workbench allow you to leverage pre-configured virtual machines with reasonable resource allocation, keeping costs lower compared to options involving managed notebooks or Dataproc clusters.

Development flexibility: User-managed notebooks offer full control over the environment, allowing you to install additional libraries or dependencies needed for your specific EDA, preprocessing, and model training tasks. This flexibility is crucial while experimenting with different algorithms.

BigQuery integration: The %%bigquery magic commands provide seamless integration with BigQuery within the Jupyter Notebook environment. This enables efficient querying and exploration of customer transaction data stored in BigQuery directly from the notebook, streamlining the workflow.

Other options and why they are not the best fit:

B. Managed notebook: While managed notebooks offer an easier setup, they might have limited customization options, potentially hindering your ability to install specific libraries or tools.

C. Dataproc Hub: Dataproc Hub focuses on running large-scale distributed workloads, and it might be overkill for your scenario involving exploratory analysis and experimentation with different algorithms. Additionally, it could incur higher costs compared to a user-managed notebook.

D. Dataproc cluster with spark-bigquery-connector: Similar to option C, using a Dataproc cluster with the spark-bigquery-connector would be more complex and potentially more expensive than using %%bigquery magic commands within a user-managed notebook for accessing BigQuery data.

QUESTION DESCRIPTION:

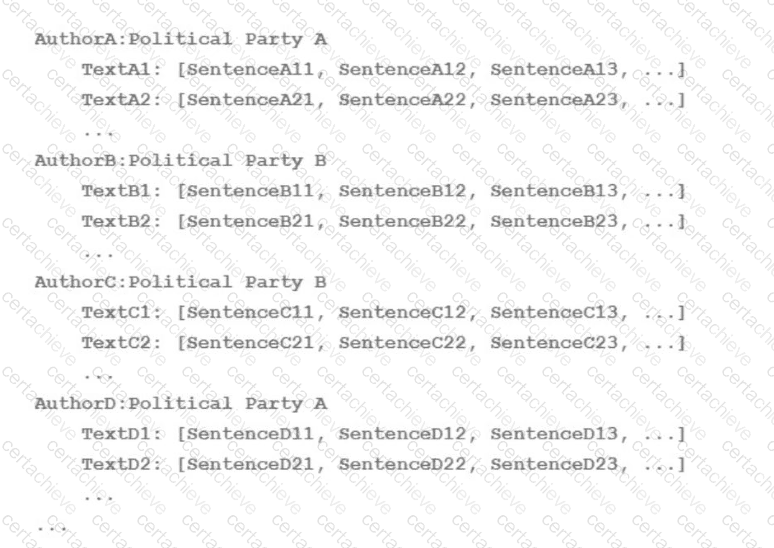

Your team is working on an NLP research project to predict political affiliation of authors based on articles they have written. You have a large training dataset that is structured like this:

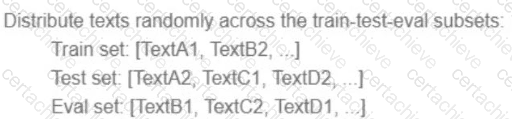

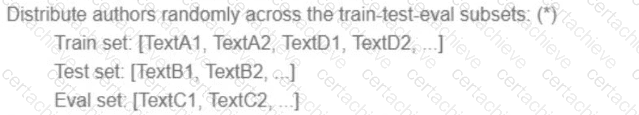

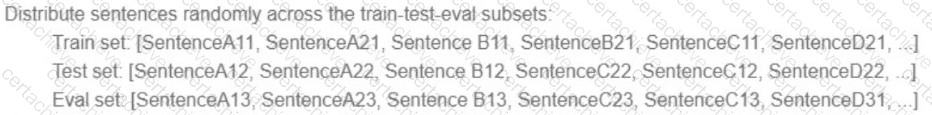

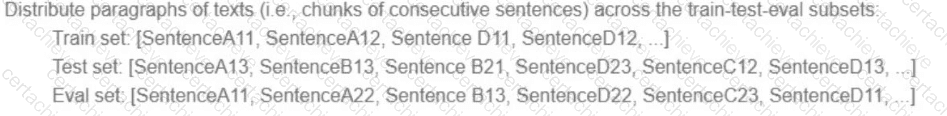

You followed the standard 80%-10%-10% data distribution across the training, testing, and evaluation subsets. How should you distribute the training examples across the train-test-eval subsets while maintaining the 80-10-10 proportion?

A)

B)

C)

D)

Correct Answer & Rationale:

Answer: C

Explanation:

The best way to distribute the training examples across the train-test-eval subsets while maintaining the 80-10-10 proportion is to use option C. This option ensures that each subset contains a balanced and representative sample of the different classes (Democrat and Republican) and the different authors. This way, the model can learn from a diverse and comprehensive set of articles and avoid overfitting or underfitting. Option C also avoids the problem of data leakage, which occurs when the same author appears in more than one subset, potentially biasing the model and inflating its performance. Therefore, option C is the most suitable technique for this use case.

QUESTION DESCRIPTION:

Your company manages a video sharing website where users can watch and upload videos. You need to

create an ML model to predict which newly uploaded videos will be the most popular so that those videos can be prioritized on your company’s website. Which result should you use to determine whether the model is successful?

Correct Answer & Rationale:

Answer: C

Explanation:

In this scenario, the goal is to create an ML model to predict which newly uploaded videos will be the most popular on a video sharing website. The result that should be used to determine whether the model is successful is the one that best aligns with the business objective and the evaluation metric. Option C is the correct answer because it defines the most popular videos as the ones that have the highest watch time within 30 days of being uploaded, and it sets a high accuracy threshold of 95% for the model prediction.

Option C: The model predicts 95% of the most popular videos measured by watch time within 30 days of being uploaded. This option is the best result for the scenario because it reflects the business objective and the evaluation metric. The business objective is to prioritize the videos that will attract and retain the most viewers on the website. The watch time is a good indicator of the viewer engagement and satisfaction, as it measures how long the viewers watch the videos. The 30-day window is a reasonable time frame to capture the popularity trend of the videos, as it accounts for the initial interest and the viral potential of the videos. The 95% accuracy threshold is a high standard for the model prediction, as it means that the model can correctly identify 95 out of 100 of the most popular videos based on the watch time metric.

Option A: The model predicts videos as popular if the user who uploads them has over 10,000 likes. This option is not a good result for the scenario because it does not reflect the business objective or the evaluation metric. The business objective is to prioritize the videos that will be the most popular on the website, not the users who upload them. The number of likes that a user has is not a good indicator of the popularity of their videos, as it does not measure the viewer engagement or satisfaction with the videos. Moreover, this option does not specify a time frame or an accuracy threshold for the model prediction, making it vague and unreliable.

Option B: The model predicts 97.5% of the most popular clickbait videos measured by number of clicks. This option is not a good result for the scenario because it does not reflect the business objective or the evaluation metric. The business objective is to prioritize the videos that will be the most popular on the website, not the videos that have the most misleading or sensational titles or thumbnails. The number of clicks that a video has is not a good indicator of the popularity of the video, as it does not measure the viewer engagement or satisfaction with the video content. Moreover, this option only focuses on the clickbait videos, which may not represent the majority or the diversity of the videos on the website.

Option D: The Pearson correlation coefficient between the log-transformed number of views after 7 days and 30 days after publication is equal to 0. This option is not a good result for the scenario because it does not reflect the business objective or the evaluation metric. The business objective is to prioritize the videos that will be the most popular on the website, not the videos that have the most consistent or inconsistent number of views over time. The Pearson correlation coefficient is a metric that measures the linear relationship between two variables, not the popularity of the videos. A correlation coefficient of 0 means that there is no linear relationship between the log-transformed number of views after 7 days and 30 days, which does not indicate whether the videos are popular or not. Moreover, this option does not specify a threshold or a target value for the correlation coefficient, making it meaningless and irrelevant.

A Stepping Stone for Enhanced Career Opportunities

Your profile having Machine Learning Engineer certification significantly enhances your credibility and marketability in all corners of the world. The best part is that your formal recognition pays you in terms of tangible career advancement. It helps you perform your desired job roles accompanied by a substantial increase in your regular income. Beyond the resume, your expertise imparts you confidence to act as a dependable professional to solve real-world business challenges.

Your success in Google Professional-Machine-Learning-Engineer certification exam makes your visible and relevant in the fast-evolving tech landscape. It proves a lifelong investment in your career that give you not only a competitive advantage over your non-certified peers but also makes you eligible for a further relevant exams in your domain.

What You Need to Ace Google Exam Professional-Machine-Learning-Engineer

Achieving success in the Professional-Machine-Learning-Engineer Google exam requires a blending of clear understanding of all the exam topics, practical skills, and practice of the actual format. There's no room for cramming information, memorizing facts or dependence on a few significant exam topics. It means your readiness for exam needs you develop a comprehensive grasp on the syllabus that includes theoretical as well as practical command.

Here is a comprehensive strategy layout to secure peak performance in Professional-Machine-Learning-Engineer certification exam:

- Develop a rock-solid theoretical clarity of the exam topics

- Begin with easier and more familiar topics of the exam syllabus

- Make sure your command on the fundamental concepts

- Focus your attention to understand why that matters

- Ensure hands-on practice as the exam tests your ability to apply knowledge

- Develop a study routine managing time because it can be a major time-sink if you are slow

- Find out a comprehensive and streamlined study resource for your help

Ensuring Outstanding Results in Exam Professional-Machine-Learning-Engineer!

In the backdrop of the above prep strategy for Professional-Machine-Learning-Engineer Google exam, your primary need is to find out a comprehensive study resource. It could otherwise be a daunting task to achieve exam success. The most important factor that must be kep in mind is make sure your reliance on a one particular resource instead of depending on multiple sources. It should be an all-inclusive resource that ensures conceptual explanations, hands-on practical exercises, and realistic assessment tools.

Certachieve: A Reliable All-inclusive Study Resource

Certachieve offers multiple study tools to do thorough and rewarding Professional-Machine-Learning-Engineer exam prep. Here's an overview of Certachieve's toolkit:

Google Professional-Machine-Learning-Engineer PDF Study Guide

This premium guide contains a number of Google Professional-Machine-Learning-Engineer exam questions and answers that give you a full coverage of the exam syllabus in easy language. The information provided efficiently guides the candidate's focus to the most critical topics. The supportive explanations and examples build both the knowledge and the practical confidence of the exam candidates required to confidently pass the exam. The demo of Google Professional-Machine-Learning-Engineer study guide pdf free download is also available to examine the contents and quality of the study material.

Google Professional-Machine-Learning-Engineer Practice Exams

Practicing the exam Professional-Machine-Learning-Engineer questions is one of the essential requirements of your exam preparation. To help you with this important task, Certachieve introduces Google Professional-Machine-Learning-Engineer Testing Engine to simulate multiple real exam-like tests. They are of enormous value for developing your grasp and understanding your strengths and weaknesses in exam preparation and make up deficiencies in time.

These comprehensive materials are engineered to streamline your preparation process, providing a direct and efficient path to mastering the exam's requirements.

Google Professional-Machine-Learning-Engineer exam dumps

These realistic dumps include the most significant questions that may be the part of your upcoming exam. Learning Professional-Machine-Learning-Engineer exam dumps can increase not only your chances of success but can also award you an outstanding score.

Google Professional-Machine-Learning-Engineer Machine Learning Engineer FAQ

There are only a formal set of prerequisites to take the Professional-Machine-Learning-Engineer Google exam. It depends of the Google organization to introduce changes in the basic eligibility criteria to take the exam. Generally, your thorough theoretical knowledge and hands-on practice of the syllabus topics make you eligible to opt for the exam.

It requires a comprehensive study plan that includes exam preparation from an authentic, reliable and exam-oriented study resource. It should provide you Google Professional-Machine-Learning-Engineer exam questions focusing on mastering core topics. This resource should also have extensive hands on practice using Google Professional-Machine-Learning-Engineer Testing Engine.

Finally, it should also introduce you to the expected questions with the help of Google Professional-Machine-Learning-Engineer exam dumps to enhance your readiness for the exam.

Like any other Google Certification exam, the Machine Learning Engineer is a tough and challenging. Particularly, it's extensive syllabus makes it hard to do Professional-Machine-Learning-Engineer exam prep. The actual exam requires the candidates to develop in-depth knowledge of all syllabus content along with practical knowledge. The only solution to pass the exam on first try is to make sure diligent study and lab practice prior to take the exam.

The Professional-Machine-Learning-Engineer Google exam usually comprises 100 to 120 questions. However, the number of questions may vary. The reason is the format of the exam that may include unscored and experimental questions sometimes. Mostly, the actual exam consists of various question formats, including multiple-choice, simulations, and drag-and-drop.

It actually depends on one's personal keenness and absorption level. However, usually people take three to six weeks to thoroughly complete the Google Professional-Machine-Learning-Engineer exam prep subject to their prior experience and the engagement with study. The prime factor is the observation of consistency in studies and this factor may reduce the total time duration.

Yes. Google has transitioned to v1.1, which places more weight on Network Automation, Security Fundamentals, and AI integration. Our 2026 bank reflects these specific updates.

Standard dumps rely on pattern recognition. If Google changes a single IP address in a topology, memorized answers fail. Our rationales teach you the logic so you can solve the problem regardless of the phrasing.

Top Exams & Certification Providers

New & Trending

- New Released Exams

- Related Exam

- Hot Vendor